Evaluation of the 2009 Policy on Evaluation

Acknowledgements

This evaluation was conducted by the Centre of Excellence for Evaluation of the Treasury Board of Canada Secretariat and by an external consulting team composed of Natalie Kishchuk, CE, of Program Evaluation and Beyond Inc. and Benoît Gauthier, CE, of Circum Network Inc. The Centre of Excellence for Evaluation produced this final report, for which the external consultants provided a quality assurance review.

The Centre of Excellence for Evaluation wishes to thank the members of the advisory committees, who provided advice on the planning, conduct and reporting of the evaluation.

Table of Contents

- Executive Summary

- 1.0 Introduction

- 2.0 Evaluation Approach and Design

- 3.0 Findings

- 4.0 Conclusions

- 5.0 Recommendations

- Appendix A: Evolution of Evaluation in the Federal Government and the Context for Policy Renewal in 2009

- Appendix B: Implementation Review of the 2009 Policy on Evaluation

- Appendix C: Purpose of the Evaluation of the 2009 Policy on Evaluation, Methodology and Governance Committees for the Evaluation

- Appendix D: Contribution Theory of the 2009 Policy on Evaluation, Generic Evaluation Process Map of the Life Cycle of a Departmental Evaluation, and Logic Model for Implementing the 2009 Policy on Evaluation

- Endnotes

Executive Summary

Background

In 2013–14, the Treasury Board of Canada Secretariat conducted an evaluation of the 2009 Policy on Evaluation. The evaluation assessed the performance of the policy; established a baseline of policy results—in particular, those related to evaluation use and utility; and identified opportunities to better support departments in meeting their evaluation needs through flexible application of the policy and the associated directive and standard. This report documents the evaluation's key findings, conclusions and recommendations.

Methodology

The evaluation team was composed of external consultants as well as analysts from the Secretariat's Centre of Excellence for Evaluation. The external team members assessed policy performance, and the internal team members assessed policy application. The evaluation used both qualitative methods (case studies, process mapping, document and literature reviews, and stakeholder consultations with deputy heads, assistant deputy ministers, central agencies and others) and quantitative methods (analyses of monitoring data and surveys of program managers, evaluation managers and evaluators). The external and internal evaluation team members provided a challenge function for each other's work and assured the quality of evaluation products.

Findings and Conclusions

Performance of the Policy and Status of Policy Outcomes

Regarding the performance of the policy, the evaluation found the following:

- In general, the evaluation needs of deputy heads and senior managers were well served under the 2009 Policy on Evaluation. Senior management was able to draw strategic insights to support higher-level decision making. At the same time, efforts to meet the policy's coverage requirements sometimes made evaluation units less able to respond to senior management's emerging needs.

- The policy had an overall positive influence on meeting program managers' needs, and first-time evaluations of some programs were useful. However, program managers whose programs were evaluated as part of a broad program cluster or high-level Program Alignment Architecture entity sometimes found that their needs were not met as well as before 2009, when the program was evaluated on its own.

- Central agencies found that evaluations were increasingly available, and they and departments increasingly used evaluations to inform expenditure management activities such as spending proposals (in particular program renewals) and spending reviews. At the same time, evaluations often did not meet central agencies' needs for information about program efficiency and economy.

- Evaluation use under the 2009 policy was extensive, but use and impact could be improved by ensuring that the evaluations undertaken, and their timing, scope[Footnote 1] and focus,[Footnote 2] closely align with the needs of users.

- The main use of evaluations was to support policy and program improvement.

- The increased use of evaluations to support decision making was enabled by an observed government-wide culture shift toward valuing and using evaluations.

- The factors that had the most evident positive influence on evaluation use in departments were policy elements related to governance and leadership of the evaluation function, whereas the factors that most evidently hindered evaluation use were those related to resources and timelines.

- Despite concerns about their capacity to meet all policy requirements, departments generally planned and expected to meet all requirements in the current five-year period.

Relevance and Impact of the Three Major Policy Requirements

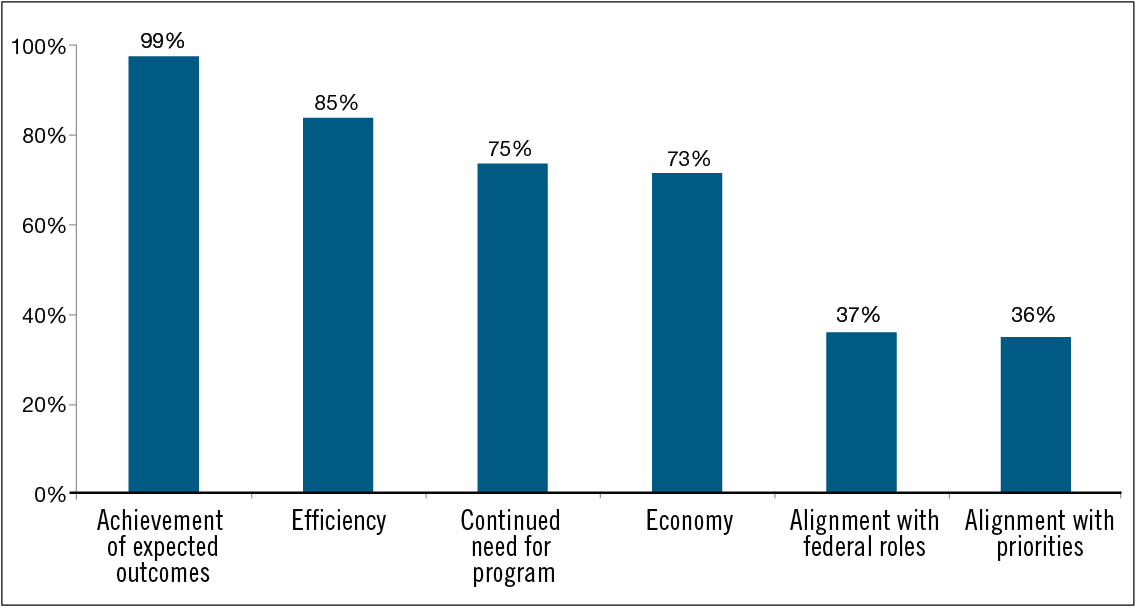

Regarding the relevance and impact of the three major policy requirements (comprehensive coverage of direct program spending, five-year frequency for evaluations, and examination of the five core issues[Footnote 3]), the evaluation found the following:

- Challenges in implementing comprehensive coverage stemmed from the combined demands of the three key policy requirements in a context of limited resources for conducting evaluations. The five-year frequency requirement appeared to be central to the implementation challenges in most departments.

- Stakeholders at all levels recognized the benefits of comprehensive coverage for encompassing the needs of all evaluation users and for serving all purposes targeted by the policy. Nevertheless, there were clear situations where individual evaluations had low utility.

- The five-year frequency for evaluations demonstrated benefits and drawbacks that varied according to the nature of programs and the needs of users. To optimize evaluation utility for a given program, a longer, shorter or adjustable frequency may be required.

- In combination with the comprehensive coverage requirement, the five-year frequency limited the flexibility of evaluation units to respond to emerging or higher-priority information needs.

- In general, the five core issues covered the appropriate range of issues and provided a consistent framework that allowed for comparability and analysis of evaluations within and across departments, as well as across time. However, the perceived pertinence of some core issues varied by evaluation and by type of evaluation user.

- Longstanding inadequacies in the availability and quality of program performance measurement data and incompatibly structured financial data continued to limit evaluators in providing assessments of program effectiveness, efficiency (including cost-effectiveness) and economy. Central agencies and senior managers desired, in particular, more and better information on program efficiency and economy.

Approaches Used to Measure Policy Performance

Regarding the approaches used to measure policy performance, the evaluation found the following:

- Mechanisms for measuring policy performance tracked the obvious uses of evaluations—those that were direct and more immediate—but did not capture the range of indirect, long-term or more strategic uses, and may not have given a robust perspective on the usefulness of evaluations.

Other Findings

The evaluation also found the following:

- The requirements of the Policy on Evaluation and those of other forms of oversight and review, such as internal audit, created some overlap and burden.

Conclusions

The 2009 Policy on Evaluation helped the government-wide evaluation function play a more prominent role in supporting the Expenditure Management System. The policy also supported uses such as program and policy improvement, accountability and public reporting. Strong engagement from deputy heads and senior managers in the governance of the evaluation function promoted the utility of evaluations, and the evaluation needs of deputy heads, senior managers and central agencies were well served. In some cases, but not systematically across departments, evaluation functions produced horizontal analyses that contributed to useful cross-program learning, informing improvements to the program evaluated, to other programs and to the organization as a whole. However, in assessing program effectiveness, efficiency and economy, departmental evaluation functions were often limited by inadequacies in the availability and quality of performance measurement data and by incompatibly structured financial data.

The findings showed that while there was a general belief that all government spending should be evaluated periodically, there was also a widely held view that the potential for individual evaluations to be used should influence their conduct. Further, the policy requirements for evaluation timing and focus did not leave sufficient flexibility for departmental evaluation functions to fully reflect the needs of users in evaluation planning or to respond to emerging priorities. Evaluation needs were found to vary among different user groups (in particular, the needs of central agencies and departments were somewhat different). However, to fulfill coverage requirements within their resource constraints, departments sometimes chose evaluation strategies (for example, clustering programs for evaluation purposes) that were economical but that ultimately served a narrower range of users' needs. The lack of flexibility in the coverage and frequency requirements also made it challenging for departments to coordinate evaluation planning with other oversight functions in order to maximize the usefulness of evaluations and minimize program burden.

Recommendations

The evaluation recommends that when developing a renewed Policy on Evaluation for approval by the Treasury Board, the Treasury Board of Canada Secretariat should:

- Reaffirm and build on the 2009 Policy on Evaluation's requirements for the governance and leadership of departmental evaluation functions, which demonstrated positive influences on evaluation use in departments.

- Add flexibility to the core requirements of the 2009 Policy on Evaluation and require departments to identify and consider the needs of the range of evaluation user groups when determining how to periodically evaluate organizational spending (including the scope of programming or spending examined in individual evaluations), the timing of individual evaluations, and the issues to examine in individual evaluations.

- Work with stakeholders in departments and central agencies to establish criteria to guide departmental planning processes so that all organizational spending is considered for evaluation according to the core issues; that the needs of the range of key evaluation users, both within and outside the department, are understood and used to drive planning decisions; that the planned activities of other oversight functions are taken into account; and that the rationale for choices related to evaluation coverage and to the scope, timing and issues addressed in individual evaluations is transparent in departmental evaluation plans.

- Engage the Secretariat's policy centres that guide departments in the collection and structuring of performance measurement data and financial management data in order to develop an integrated approach to better support departmental evaluation functions in assessing program effectiveness, efficiency and economy.

- Promote practices, within the Secretariat and departments, for undertaking regular, systematic cross-cutting analyses on a broad range of completed evaluations and using these analyses to support organizational learning and strategic decision making across programs and organizations. In this regard, the Treasury Board of Canada Secretariat should facilitate government-wide sharing of good practices for conducting and using cross-cutting analyses.

1.0 Introduction

1.1 Purpose of the Evaluation of the Policy on Evaluation

The 2009 Policy on Evaluation requires its own evaluation every five years.

The objectives of the evaluation were to:

- Assess the application and performance (effectiveness, efficiency and economy) of the policy and develop a baseline of results—in particular, those related to the use and utility of evaluation; and

- Identify opportunities to better support departments in meeting their evaluation needs through flexible application of the policy and the associated directive and standard.

This evaluation will inform the Treasury Board of Canada Secretariat in fulfilling its responsibilities to develop policy and to lead the government-wide evaluation function.

1.2 Background and Context

1.2.1 Evolution of the Federal Policy on Evaluation and the Context for Policy Renewal in 2009

The federal government has had central evaluation policies in place since 1977. Before the 2009 Policy on Evaluation, federal policies positioned the evaluation function to inform the management and improvement of programs, primarily from a program manager's perspective. In response to the increasing need for neutral, credible evidence on the value for money of government spending, the 2009 policy broadened the policy focus to include a more prominent role for the evaluation function in supporting the Expenditure Management System. Further, the policy situated the head of evaluation as a strategic advisor to the deputy head on the relevance and performance of departmental programs. Factors that led to refocusing the evaluation function included:

- The 2006 legislated requirement (Financial Administration Act, section 42.1) for all ongoing programs of grants and contributions to be reviewed for relevance and effectiveness every five years;

- The 2007 renewal of the Expenditure Management System, which was aligned with the Auditor General's recommendations,[Footnote 4] Budget 2006 commitments, and recommendations of the Standing Committee on Public Accounts[Footnote 5] (as adopted by the Standing Committee[Footnote 6]) on positioning evaluation to better support expenditure management decision making; and

- The advent of strategic and other spending reviews, which increased the demand for evaluations to provide information about program relevance and performance.

Coverage requirements existed in all previous federal evaluation policies and ranged from ensuring that all programs were evaluated periodically to considering, but not requiring, evaluation of all programs. The 2009 policy requires evaluations of all direct program spending.[Footnote 7] Similarly, a frequency of evaluation was consistently specified in federal evaluation policies; however, this frequency varied from every three years to every six years. It is now every five years under the 2009 policy. Further, all federal evaluation policies included a set of issues to be addressed in evaluations. Since 1992 these issues have been consistent, requiring evaluations to examine the relevance, effectiveness and efficiency of programs. However, a notable change in 2009 was that core evaluation issues were no longer discretionary; the 2009 policy makes it mandatory for evaluations to address five core issues in order to meet coverage requirements.

For more information on the evolution of evaluation in the federal government, see Appendix A.

1.2.2 The International Context for Evaluation

Internationally, as governments undertook cost-cutting and cost-containment exercises in recent years, several countries expanded evaluation coverage and took steps to improve evaluation quality and to emphasize the use of evaluation in decision making. For example, the United Kingdom and the United States took steps to bolster the use of evaluation evidence in determining whether program spending is effective and provides value for money. The United Kingdom's guidance on evaluation for government departments and agencies[Footnote 8] indicates that, with specific exceptions, all policies, programs and projects should be comprehensively evaluated and that the risk of not evaluating is not knowing whether interventions are effective or delivering value for money. In the United States, evaluations are promoted as a means to “help the Administration determine how to spend taxpayer dollars effectively and efficiently—investing more in what works and less in what does not.”[Footnote 9]

1.2.3 Introduction of the 2009 Policy on Evaluation

The current federal Policy on Evaluation was introduced in 2009, replacing the 2001 Evaluation Policy. The objective of the 2009 policy is to create a comprehensive and reliable base of evaluation evidence that is used to support policy and program improvement, expenditure management, Cabinet decision making and public reporting. To meet this objective, the policy strengthened requirements for evaluation coverage; for the assessment of the value for money of programs; for the quality and timeliness of evaluations and the neutrality of the function; and for evaluation capacity in departments. In its report on Chapter 1, “Evaluating the Effectiveness of Programs,” of the Fall 2009 Report of the Auditor General of Canada, the Standing Committee on Public Accounts expressed support for the direction of the new policy by stating, “Effectiveness evaluations are very important for making good, informed decisions about program design and where to allocate resources. The Committee has long encouraged the development of effectiveness evaluation within the federal government and is pleased that the government has strengthened the requirements for evaluation.”

The 2009 policy and the associated directive and standard do the following:

- Establish evaluation as a function led by the deputy head, with a neutral departmental governance structure;

- Require comprehensive coverage of direct program spending[Footnote 10] every five years;

- Articulate core issues of program relevance and performance that must be addressed in all evaluations (see Appendix A, Table 2);

- Introduce requirements for program managers to develop and implement ongoing performance measurement strategies;

- Set competency requirements for departmental heads of evaluation;

- Set quality standards for individual evaluations; and

- Require that evaluation reports be made easily available to Canadians in a timely manner.

The 2009 Policy on Evaluation and Directive on the Evaluation Function introduced flexibilities to help departments achieve comprehensive coverage.

Because of the significant changes introduced by the policy, and on the advice of an advisory committee of deputy heads[Footnote 11] in 2008, a four-year phased implementation was adopted to give departments time to build their capacity for achieving comprehensive evaluation coverage. During this transition period, departments could use a risk-based approach to choose which components of direct program spending to evaluate. The transition period did not apply to ongoing programs of grants and contributions, which had to be evaluated every five years in accordance with the 2006 legal requirement.

Following the transition period, which ended at the close of fiscal year 2012–13, all direct program spending became subject to evaluation, and the requirement for comprehensive coverage will need to be met for the first time by the end of the five-year period that followed. As stipulated in Annex A of the Directive on the Evaluation Function, departments could consider risk, program characteristics and other factors[Footnote 12] when choosing evaluation approaches and when calibrating the methods and the level of effort applied to each evaluation. For example, calibrating an evaluation to expend less effort could entail:

- Selecting fewer and more targeted evaluation questions to examine the core value-for-money issues, or to focus on known problem areas of the program;

- Choosing a streamlined evaluation approach and a design with a shortened timeline;

- Calibrating the methods used and the level of effort by leveraging existing data instead of collecting new data whenever possible; by using smaller sample sizes; by using lower-cost interviewing methods (such as online or telephone instead of in-person, or clusters of in-person interviews to limit travel costs); or by conducting fewer case studies.

Departments could also adjust the scope of evaluations by grouping programs rather than evaluating programs individually.

1.3 Overview of the Federal Evaluation Function

Under the 2009 Policy on Evaluation, evaluation serves various users, including deputy heads, central agencies, program managers, ministers, parliamentarians and Canadians. Evaluations support various uses, including policy and program improvement, expenditure management, Cabinet decision making and public reporting.

As examples of users and uses, evaluations may inform program managers about improvements to programs and proposals for program renewal or redesign (including Treasury Board submissions); support deputy heads in allocating resources across programs; support central agencies in playing their “challenge function” as they analyze and provide advice on Treasury Board submissions, Memoranda to Cabinet, and spending review proposals; and assist departments in reporting to parliamentarians and Canadians on program results.

Responsibilities for establishing and sustaining a strong federal evaluation function are shared. While the responsibility for conducting evaluations rests with individual departments and agencies, the Secretary of the Treasury Board plays a leadership role for the whole function, supported by the Centre of Excellence for Evaluation of the Treasury Board of Canada Secretariat. In leading the government-wide evaluation function, the Secretariat:

- Supports departments in implementing the 2009 Policy on Evaluation;

- Encourages the development and sharing of effective evaluation practices across departments;

- Supports capacity-building initiatives in the government-wide evaluation function;

- Monitors and reports annually to the Treasury Board on government-wide evaluation priorities and the health of the evaluation function; and

- Develops policy recommendations for the Treasury Board.

The Policy on Evaluation mandates key roles and structures for leading and governing departmental evaluation functions, as well as tools for planning their activities. These include the role of the head of evaluation as the departmental lead for evaluation and strategic advisor to the deputy head; the role of the departmental evaluation committee in advising the deputy head and facilitating the use of evaluation; and the departmental evaluation plan as a tool for expressing plans and priorities and assisting the coordination of evaluation and performance measurement needs. In small departments and agencies, deputy heads lead the evaluation function. They are required to designate a head of evaluation, but they are not required to establish departmental evaluation committees or to develop departmental evaluation plans.

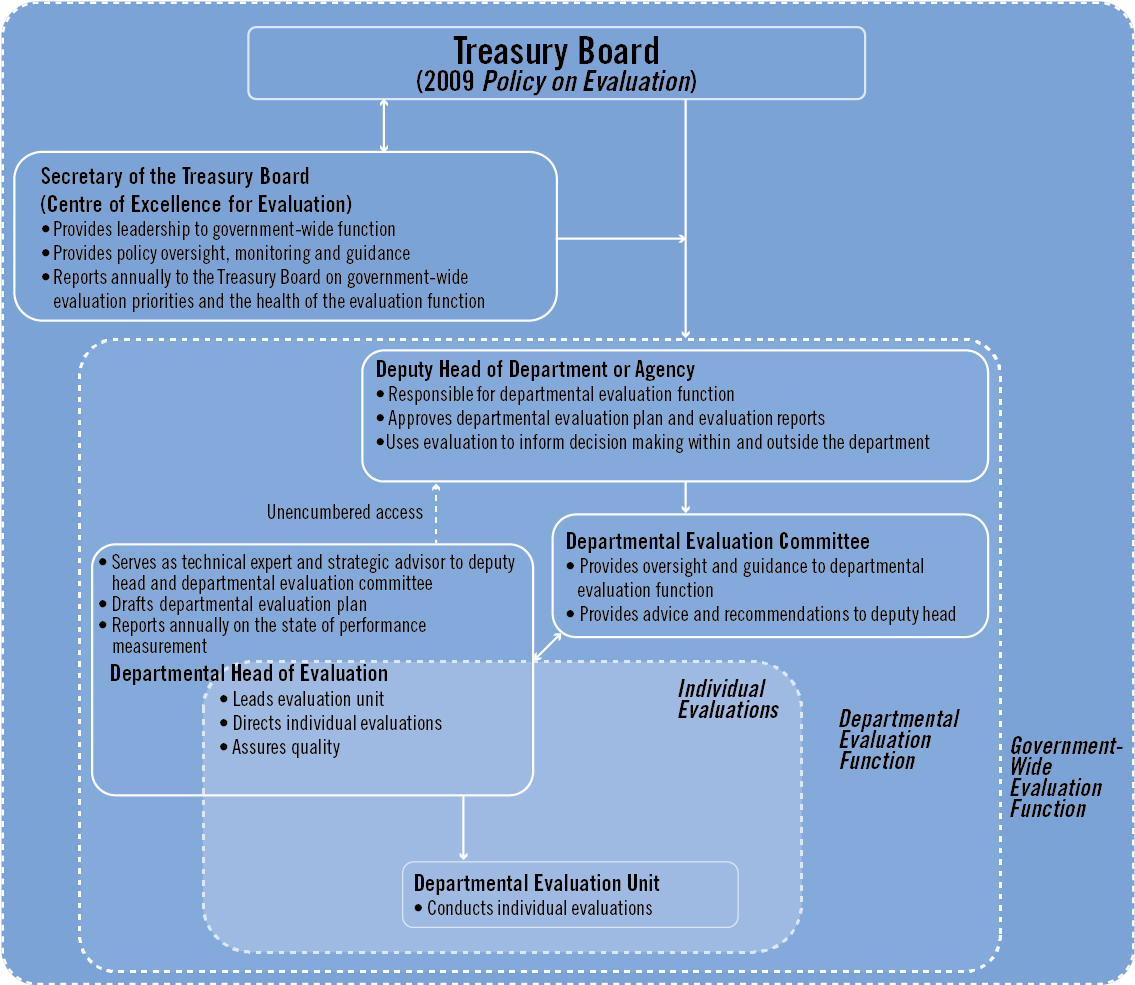

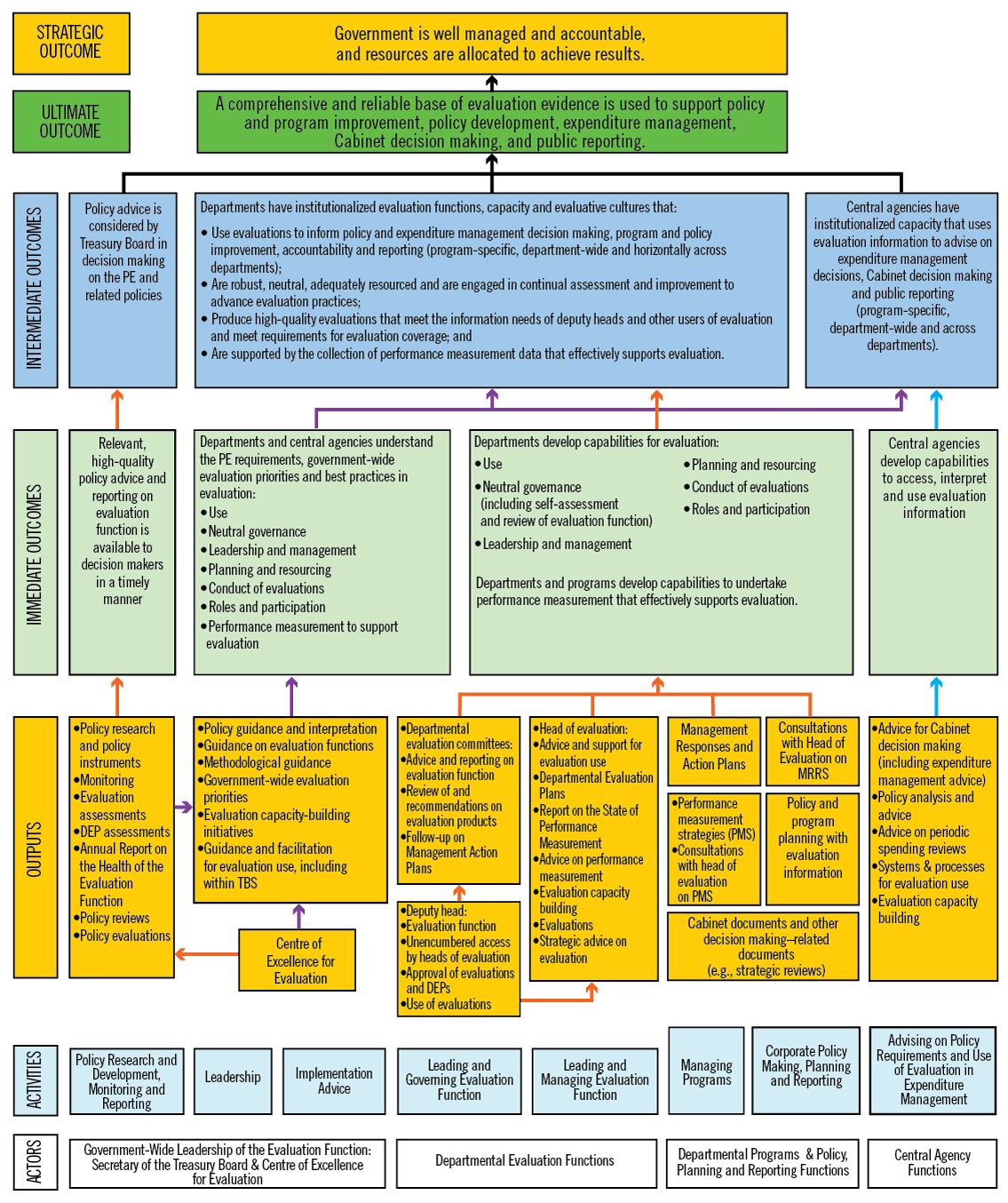

Figure 1 depicts the structure of the federal evaluation function and key roles and responsibilities, from the perspective of a large department or agency.

Figure 1 - Text version

The figure shows the structure of the federal evaluation function in a large department or agency, including the governance structures and responsibilities of individuals and organizations, from three nested perspectives. The three perspectives, from narrowest to widest, are: individual evaluations, individual departmental evaluation functions and the government-wide evaluation function.

From the perspective of individual evaluations, departmental evaluation units are responsible for conducting evaluations. As the leader of the departmental evaluation unit, the departmental head of evaluation directs individual evaluations and assures their quality.

From the perspective of the departmental evaluation function, the deputy head has overall responsibility for the departmental evaluation function, approves the departmental evaluation plan and individual evaluation reports, and uses evaluations to inform decision making within and outside the department or agency. The departmental evaluation committee provides oversight and guidance to the departmental evaluation function and provides advice and recommendations to the deputy head. The departmental head of evaluation serves as a technical expert and a strategic advisor to the deputy head and the departmental evaluation committee, drafts the departmental evaluation plan, and reports annually on the state of performance measurement. The departmental head of evaluation has unencumbered access to the deputy head on evaluation matters.

From the government-wide perspective, the Treasury Board sets government-wide policy for the federal evaluation function through the 2009 Policy on Evaluation. The policy establishes the role and responsibilities for the Secretary of the Treasury Board for providing leadership to the government-wide function. Supported by the Centre of Excellence for Evaluation, the Secretary provides policy oversight, monitoring and guidance, and reports annually to the Treasury Board on government-wide evaluation priorities and the health of the evaluation function.

1.4 Implementation of the Policy on Evaluation

After the introduction of the 2009 policy, the Treasury Board of Canada Secretariat continually monitored and reported on the policy's implementation. To identify issues, the Secretariat completed an Implementation Review in 2013 that examined the four-year policy transition period before full implementation of five-year comprehensive evaluation coverage.

Taken together, the Implementation Review and the Secretariat's Annual Reports on the Health of the Evaluation Function from 2010 to 2012 showed that departments had made solid progress during the policy's four-year transition period in establishing governance structures for the function (for example, departmental evaluation committees and heads of evaluation), building evaluation capacity, increasing evaluation coverage, planning for comprehensive coverage, and using evaluation to support decision making.

When introducing the 2009 policy, the Secretariat projected that departments would need to increase investment in the evaluation function to achieve and sustain comprehensive evaluation coverage every five years; however, a period of government-wide spending reviews followed. Table 1 shows the number of evaluations and the resources allocated to them during the last two years of the 2001 policy and the four-year transition period of the 2009 policy, for large departments and agencies in the Government of Canada.

The Secretariat's monitoring showed that government-wide financial resources for the function were roughly stable until 2011–12 and then declined. However, the number of full-time equivalents dedicated to the function rose slightly compared with 2008–09 (the last year of the 2001 policy), apparently because departments reallocated budgets for professional services to salaries.

| Fiscal year | 2007–08 | 2008–09 | 2009–10 | 2010–11 | 2011–12 | 2012–13 |
|---|---|---|---|---|---|---|
| Policy in effect | 2001 Evaluation Policy | 2001 Evaluation Policy | 2009 Policy on Evaluation (transition period) | 2009 Policy on Evaluation (transition period) | 2009 Policy on Evaluation (transition period) | 2009 Policy on Evaluation (transition period) |
| Number of evaluations | 121 | 134 | 164 | 136 | 146 | 123 |
| Full-time equivalents | 409 | 418 | 474 | 459 | 477 | 459 |
| Financial resources ($ millions) | | | | | | |
| Salary | 28.4 | 32.3 | 37.1 | 38.2 | 39.0 | 40.8 |
| Operations and maintenance | 17.9 | 4.4 | 5.0 | 4.3 | 4.6 | 3.8 |
| Professional services | 4.2 | 20.5 | 19.1 | 17.6 | 14.3 | 11.6 |
| Other | 6.7† | 3.7†† | 5.8†† | 0.3†† | 2.2†† | Not applicable§ |
| Total financial resources§§ | 57.3 | 60.9 | 66.9 | 60.2 | 60.2 | 56.2 |

Table 1 notes. Source: Capacity Assessment Surveys and Treasury Board of Canada Secretariat monitoring.

Although the number of evaluations produced in the final year of the four-year transition period (123) was lower than the number produced in the first year (164), evaluation reports produced in 2012–13 covered greater amounts of direct program spending than they had covered before 2009–10. In 2012–13, the average evaluation covered approximately $78 million in direct program spending, compared with an average of $44 million covered per evaluation in 2008–09. Thus evaluation information was available for a greater amount of direct program spending government-wide under the 2009 policy than under the 2001 policy.
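The trends described for Table 1 can be checked with a short calculation. The following is a minimal sketch in Python, not part of the evaluation's own analysis: the figures are transcribed from Table 1 and from the averages quoted above, while the names `TABLE_1`, `pct_change` and `implied_coverage` are hypothetical and introduced only for illustration. The implied coverage totals are approximate, because they multiply rounded averages by the number of evaluations.

```python
# Figures transcribed from Table 1 (large departments and agencies).
# Financial rows are in millions of dollars.
YEARS = ["2007-08", "2008-09", "2009-10", "2010-11", "2011-12", "2012-13"]
TABLE_1 = {
    "ftes": [409, 418, 474, 459, 477, 459],
    "salary": [28.4, 32.3, 37.1, 38.2, 39.0, 40.8],
    "professional_services": [4.2, 20.5, 19.1, 17.6, 14.3, 11.6],
    "total": [57.3, 60.9, 66.9, 60.2, 60.2, 56.2],
}


def pct_change(row, start, end):
    """Percentage change in one Table 1 row between two fiscal years."""
    first = TABLE_1[row][YEARS.index(start)]
    last = TABLE_1[row][YEARS.index(end)]
    return round(100 * (last - first) / first, 1)


def implied_coverage(avg_millions, n_evaluations):
    """Approximate total spending covered: average per evaluation x count."""
    return avg_millions * n_evaluations


if __name__ == "__main__":
    # Change from the last year of the 2001 policy to the end of the transition.
    for row in TABLE_1:
        print(row, pct_change(row, "2008-09", "2012-13"), "%")

    # Implied direct program spending covered, in millions of dollars.
    print("2008-09:", implied_coverage(44, 134))
    print("2012-13:", implied_coverage(78, 123))
```

Under these assumptions, full-time equivalents rise while total resources and professional services spending fall over the same period, and the implied spending covered in 2012–13 exceeds that of 2008–09 even though fewer evaluations were produced.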

The Implementation Review found that departments used one or more of the following strategies to expand evaluation coverage within their budgeted resources:

- Clustering programs for evaluation purposes;

- Calibrating the effort devoted to evaluation projects;

- Relying more on internal evaluators; and

- Minimizing non-evaluation activities.

For a summary of the findings from the Implementation Review, see Appendix B.

1.5 Context for Policy Renewal in 2014

The evaluation of the 2009 Policy on Evaluation was carried out during the same period as a separately conducted assessment of the Policy on Management, Resources and Results Structures. Together, these exercises provided input to a broader policy dialogue that sought opportunities for improving both policies.

2.0 Evaluation Approach and Design

2.1 Approach and Design

The evaluation used a largely goal-based learning approach aimed at determining the degree to which policy objectives were met and why, as well as a contribution analysis (theory-driven) model to identify and test the assumptions and mechanisms of the policy. The approach was also a collaborative one, in that the evaluation team included external consultants as well as analysts from the Treasury Board of Canada Secretariat's Centre of Excellence for Evaluation, which is the unit responsible for developing and making policy recommendations to the Treasury Board. The external team members assessed the performance of the Policy on Evaluation, established a baseline of results, and assessed the approaches that departments and the Secretariat used to measure the policy's performance. The internal team members examined the application of policy requirements and explored opportunities for adding flexibility. The external and internal evaluation team members provided a challenge function for each other's work and assured the quality of evaluation products.

The evaluation used various research designs, including multiple case studies, interrupted time series, retrospective pretests[Footnote 13] and descriptive elements.

2.2 Methodology

The evaluation used the following methods:

- Policy performance case studies of 10 departments and agencies to qualitatively analyze evaluation use, using a total of 28 evaluations conducted across these departments. Eighty-six key informant interviews were conducted with heads and directors of evaluation, evaluation team members, managers of evaluated programs, departmental evaluation committee members and central agency officials. Case studies also involved document reviews;

- Policy application case studies of six types of programs or categories of spending, using 24 examples from departments and agencies, to qualitatively analyze the relevance of key policy requirements and identify opportunities for flexibility in the requirements for comprehensive coverage of direct program spending, five-year frequency for evaluations, and examination of the five core issues. For the case studies, 39 consultations were conducted with departmental program managers and evaluation professionals, and 8 consultations were conducted with central agency representatives. The six types of programs or spending categories were:

  - Assessed contributions to international organizations;Footnote 14

  - Endowment funding;Footnote 15

  - Programs with a requirement for recipient-commissioned independent evaluations;Footnote 16

  - Low-risk programs;

  - Programs with a long horizon to results achievement;

  - Other programs identified by departments as challenging for policy application;

- Consultations with 35 heads of evaluation, or their delegates, in small group settings;

- Online surveys of 115 program managers and 153 evaluation managers and evaluators;

- Descriptive and inferential statistical analyses on policy monitoring data previously collected by the Centre of Excellence for Evaluation (Capacity Assessment Survey and Management Accountability Framework Assessment Results);

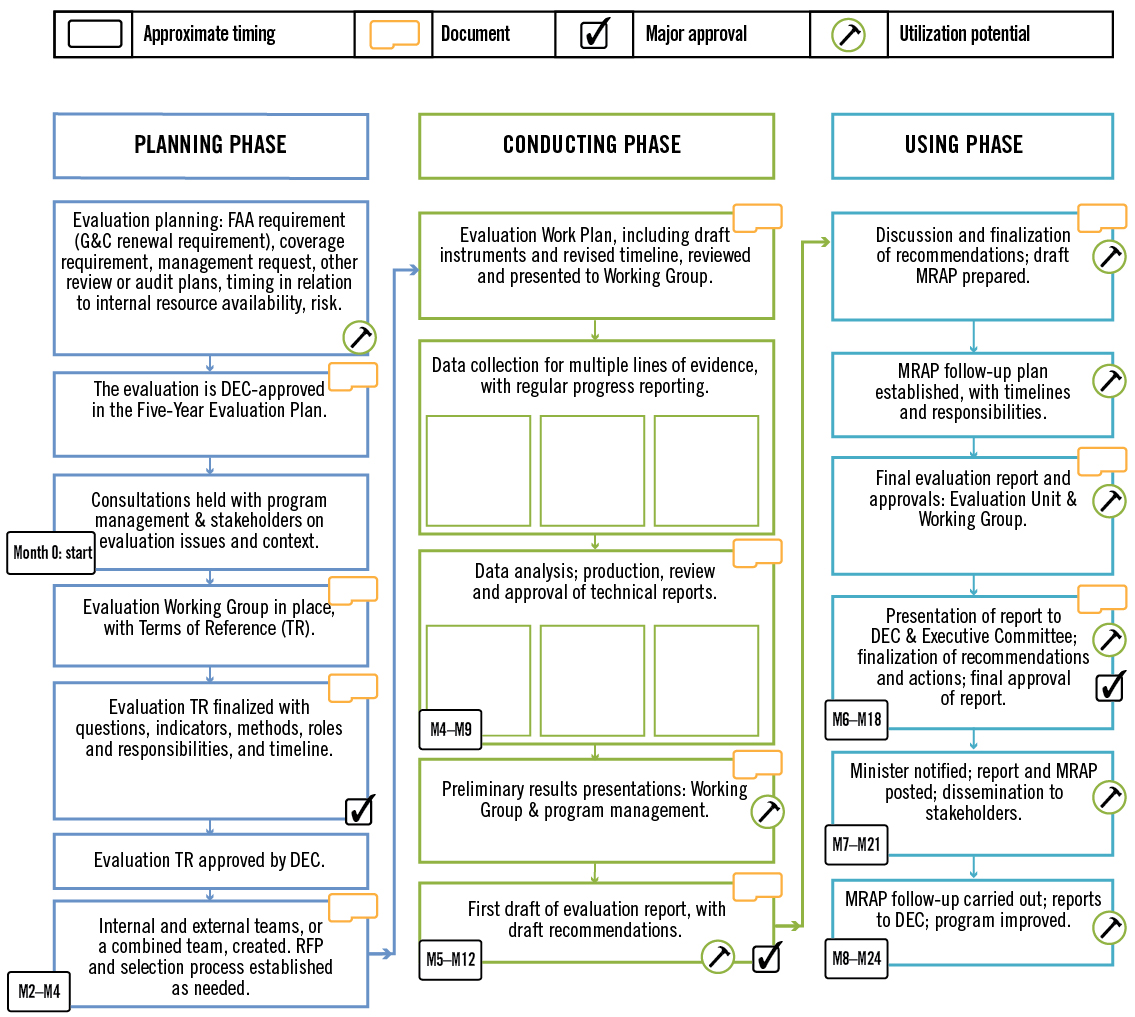

- Process mapping to give an overview of how the evaluation function operates in departments, including processes for planning, conducting and using evaluations;

- A review of internal and external documents, including the Implementation Review of the Policy on Evaluation, and a summary of consultations held in 2014 with deputy heads and other key respondents related to the five-year assessment exercises of the Policy on Evaluation and the Policy on Management, Resources and Results Structures; and

- A review of literature on the evaluation policies and practices of other jurisdictions, including the United States, the United Kingdom, Australia, Switzerland, Japan, India, South Africa, Mexico and Spain, as well as the United Nations Evaluation Group, the Development Assistance Committee of the Organisation for Economic Co-operation and Development, and the World Bank Independent Evaluation Group.

For more information on the methods used for the evaluation, see Appendix C.

2.3 Governance

The evaluation was governed by two advisory committees: one composed of heads of evaluation, and the other composed of central agency representatives. Each committee's work was governed by terms of reference. Each committee provided comments and feedback on the overall evaluation plan, including evaluation questions and case study categories, the evaluation work plan for the external evaluation team, the preliminary findings of both the policy performance and policy application case studies, the draft overall findings for the final evaluation report, and the final evaluation report.

For more information on the governance committees for this evaluation, see Appendix C.

2.4 Evaluation Period and Questions

The evaluation of the 2009 Policy on Evaluation covered the period since the policy was introduced on , to .

The evaluation questions were the following:

1. Under what circumstances or conditions, if any, is it appropriate to not address all five core issues in an evaluation? What impacts would this have on the use and utility of evaluations for different users (including those in line departments and central agencies) and the objective of the policy?

2. Under what circumstances and conditions, if any, is the five-year requirement for evaluation not appropriate? What impacts, if any, would changes to the five-year requirement have on the use and utility of evaluations for different users (including those in line departments and in central agencies) and the objective of the policy?

3. Is the comprehensive coverage approach the most appropriate model for ensuring that evaluation supports policy and program improvement, expenditure (direct program spending) management, Cabinet decision making, and public reporting?

4. To what extent are the current approaches to measuring policy performance appropriate, valid and reliable?

5. What are the baseline results for measures of policy outcomes specific to the use of evaluations to support:

   - Policy and program development and improvement?

   - Expenditure (direct program spending) management?

   - Cabinet decision making?

   - Accountability and public reporting?

   - Meeting the needs of deputy heads and other users of evaluation?

6. Are evaluations leading to improved expenditure (direct program spending) management decision making, effectiveness, efficiency or savings for programs and policies?

7. To what extent can outcome achievement be maintained given current capacity and resources?

8. What are the major internal and external factors influencing the achievement (or non-achievement) of intended outcomes?

2.5 Limitations

The Centre of Excellence for Evaluation was both the manager of the entity under evaluation (the Policy on Evaluation) and a part of the evaluation team. To mitigate any concerns about the centre's objectivity in conducting the evaluation, the advisory committees reviewed evaluation plans and draft deliverables; the external team played a challenge role related to the work of the internal team; a quality assurance process was established for technical and final reports, for which the external and internal evaluation teams were both responsible; and a contribution theory was used for the Policy on Evaluation (see Appendix D) to analyze potential alternative explanations for observed policy outcomes.

For the case studies, departments self-identified evaluation examples, leading to a possibility of selection and response bias in the information provided about the examples. To validate the self-reported information, a review and analysis of documents and supporting literature was conducted by the evaluation team. In addition, consultations were held with central agency representatives and follow-up consultations were held with departmental representatives from both the evaluation unit and program areas.

In most cases, central agency representatives were not able to comment on specific cases (program examples or case study categories), as staff turnover had occurred since the completion of evaluations. Whenever possible, evidence related to the specific cases was gathered; otherwise, general perceptions and observations were explored on the applicability and utility of the policy requirements and on alternative approaches. In some cases, departments had also experienced turnover or did not respond to requests for consultations.

A potential limitation for the performance case studies was that for recent evaluations conducted according to the requirements of the 2009 policy, not enough time would have passed for those evaluations to be fully used. To mitigate this limitation, evaluations that were completed before 2013 were included among the selected cases.

3.0 Findings

3.1 Performance of the Policy and Status of Policy Outcomes

3.1.1 Baseline Results for Policy Outcomes (evaluation questions 5 and 6)

1. Finding: In general, the evaluation needs of deputy heads and senior managers were well served under the 2009 Policy on Evaluation. Senior management was able to draw strategic insights to support higher-level decision making. At the same time, efforts to meet the policy's coverage requirements sometimes made evaluation units less able to respond to senior management's emerging needs.

Deputy heads who were consulted indicated that under the 2009 policy, their departments produced a good base of evaluations and had the capacity to use them. The performance case studies showed that evaluations met a range of deputy head needs, such as:

- Providing evidence of program effectiveness to support renewal decisions;

- Showing where program outcomes were not likely to be achieved; and

- Revealing related findings across a set of evaluations to support strategic decision making—for example, to identify an area of generalized concern.

The performance case studies showed that evaluations supported strategic decision making by delivering a more comprehensive perspective on the performance of departmental programming than that produced under the 2001 Evaluation Policy. The trend toward evaluating clusters of programs or larger entities, along with the convergence of all evaluations at departmental evaluation committees (or executive committees), enabled senior managers to recognize patterns across multiple evaluations and programs. Consultation evidence showed that some departments produced cross-cutting analyses from multiple evaluations of programs targeting common outcomes. In one case study, the insights drawn from across several evaluations led one deputy head to request a special review of a type of funding arrangement; in another case study, such insights influenced resource reallocation among a set of high-priority, horizontal activities. Senior executives on departmental evaluation committees also applied evaluation lessons from another branch to programs in their own branch.

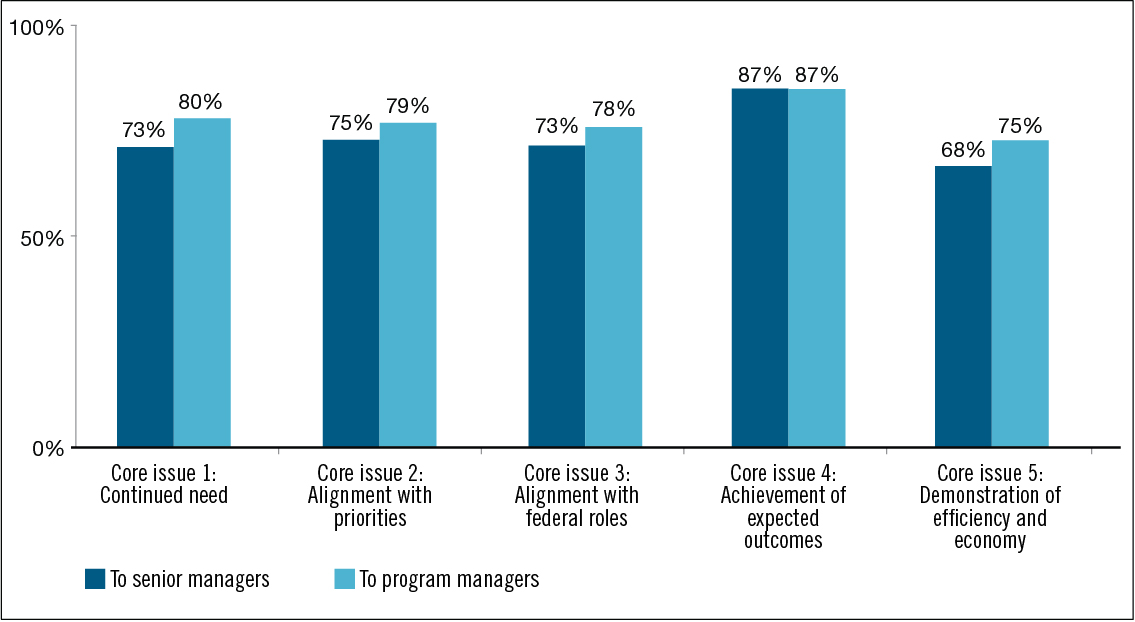

Survey evidence showed that program managers felt that senior managers were well served under the 2009 policy. Three quarters of program managers surveyed (75%) reported that it was somewhat useful (38%) or very useful (37%)Footnote 17 for senior management (deputy ministers, associate deputy ministers and assistant deputy ministers) to have evaluations of their programs every five years, as required by the policy. Further, a majority of program managers (ranging from 68% to 87%) felt that each of the five required core issues was somewhat useful or very useful to senior management.

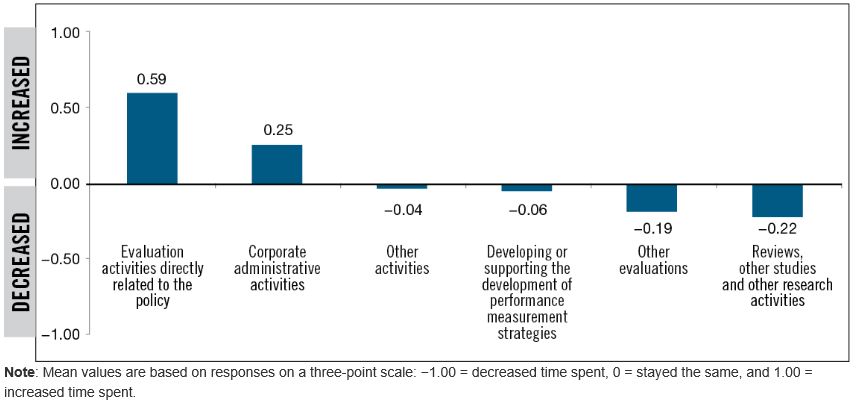

At the same time, the performance case studies showed that in some departments efforts to meet the policy's coverage requirements made evaluation units less able to respond to senior management's needs for special studies, reviews or specific evaluations on emerging issues. As shown in Figure 2, most evaluators surveyed reported that the proportion of time spent on evaluation activities directly related to the policy increased after the introduction of the 2009 policy, while the proportion of time spent on other evaluations, reviews, studies or research activities decreased.

Figure 2 - Text version

The figure shows the change in the proportion of time that evaluators, on average, have spent on various activities since the introduction of the 2009 Policy on Evaluation. The mean values for each of six activities are plotted on a vertical scale. The values from zero to one represent activities where an increased proportion of time was spent, and the values from zero to negative one represent activities where a decreased proportion of time was spent. The mean values are based on evaluators' survey responses, using a three-point scale where −1.00 means a decrease in the proportion of time spent, zero means the proportion of time spent stayed the same, and +1.00 means an increase in the proportion of time spent.

Two activities had mean values showing an increase, on average across evaluators, in the proportion of time spent on evaluation activities directly related to the policy (mean value of 0.59) and on corporate administrative activities (mean value of 0.25). Two activities had mean values that showed only a slight decrease, on average across evaluators, in the proportion of time spent on other activities (mean value of −0.04) and on developing or supporting the development of performance measurement strategies (mean value of −0.06). The remaining two activities had mean values that showed a decrease, on average across evaluators, in the proportion of time spent on other evaluations (mean value of −0.19) and on reviews, other studies, and other research activities (mean value of −0.22). The sample size (number of evaluators reporting) ranged from 41 to 82 depending on the activity.

2. Finding: The policy had an overall positive influence on meeting program managers' needs, and first-time evaluations of some programs were useful. However, program managers whose programs were evaluated as part of a broad program cluster or high-level Program Alignment Architecture entity sometimes found that their needs were not met as well as before 2009, when the program was evaluated on its own.

Program managers surveyed felt that evaluations were useful for a variety of purposes. In particular, 81% of program managers rated evaluations as somewhat useful (25%) or very useful (56%) for supporting program improvement, and 79% of program managers rated evaluations as somewhat useful (33%) or very useful (46%) for program and policy development. Performance case studies showed that some managers of programs that were evaluated for the first time gained insights that led to improvements. Further, evidence from case studies suggested that these programs may never have been evaluated were it not for the policy's comprehensive coverage requirement.

At the same time, other evidence from the Implementation Review showed that program managers did not always find their programs reflected in the findings of evaluations whose scopes aligned with Program Alignment Architecture entities (a common scope for evaluations)Footnote 18 or encompassed clusters of programs. In these cases, the evaluations did not equip them with sufficiently detailed information to make program improvements. Performance case studies illustrated that some departments addressed this issue by designing these evaluations to produce findings and conclusions at multiple levels.

In terms of assisting program managers as they developed performance measurement strategies, the policy's influence was mixed. Based on the findings from the Implementation Review, the demands of the policy's comprehensive coverage and frequency requirements may have made some evaluation units too busy to support program managers in developing their strategies to the same extent that they once had.Footnote 19 However, some evaluation units emphasized their support to program managers in this regard, to ensure that performance measurement would support future evaluations. The survey of evaluators showed that following the introduction of the 2009 policy, a slightly larger proportion (38%) of evaluators decreased the time spent supporting the development of performance measurement strategies compared with the proportion (32%) that increased the time spent. The balance of evaluators indicated that the time spent stayed the same. Despite some evaluators spending less time supporting the development of performance measurement strategies, however, 90% of program managers surveyed in 2014 indicated that their programs had a performance measurement strategy in place. Among those programs with a performance measurement strategy in place, 93% of program managers had consulted their departmental evaluation function during its development.
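The survey proportions in the preceding paragraph can be cross-checked against the three-point scale described in the Figure 2 text version. The sketch below is illustrative only and rests on stated assumptions: the function name `mean_change_score` is hypothetical, the 30% "stayed the same" share is derived as the balance (100% minus 38% minus 32%) rather than reported directly, and the published percentages are rounded. Under those assumptions, the weighted mean comes out to about −0.06, which is consistent with the mean value reported in Figure 2 for developing or supporting the development of performance measurement strategies.

```python
def mean_change_score(pct_increase, pct_same, pct_decrease):
    """Mean on a three-point scale: +1 = increase, 0 = same, -1 = decrease.

    Inputs are shares of respondents (any consistent unit, e.g. percent).
    """
    total = pct_increase + pct_same + pct_decrease
    if total <= 0:
        raise ValueError("shares must sum to a positive number")
    # Weight each response category by its scale value and normalize.
    return (1 * pct_increase + 0 * pct_same - 1 * pct_decrease) / total


# Shares from the evaluator survey: 32% increased, 38% decreased.
# The 30% "stayed the same" share is an inferred balance, not a reported figure.
score = mean_change_score(pct_increase=32, pct_same=30, pct_decrease=38)
print(round(score, 2))
```

Because the scale only records the direction of change, a mean close to zero can reflect offsetting increases and decreases rather than an absence of change, which is the case here.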

3. Finding: Central agencies found that evaluations were increasingly available, and they and departments increasingly used evaluations to inform expenditure management activities such as spending proposals (in particular program renewals) and spending reviews. At the same time, evaluations often did not meet central agencies' needs for information on program efficiency and economy.

Central agency analysts generally viewed evaluations as a key source of program information and often consulted them first in their analysis of spending proposals.Footnote 20 The performance case studies and stakeholder consultations showed that Secretariat analysts generally encouraged departmental use of evaluation findings in Treasury Board submissions, consistently required evaluation information for funding renewals in particular, and had recommended that departments not seek funding approval without a recent evaluation. Secretariat analysts reported that before the 2009 policy, evaluations were not always available to support submissions, but that today, if draft submissions do not provide evaluation information, they often seek such information from departments. In addition, when evaluation findings are negative, analysts seek departments' confirmation of corrective actions.

Several lines of evidenceFootnote 21 indicated that evaluations were more widely used as a source of supporting information for Treasury Board submissions and, to a lesser extent, for Memoranda to Cabinet. Through the Capacity Assessment Survey, 96% of large organizations reported in 2012–13 that they used all or almost all relevant evaluations to inform Treasury Board submissions, and 78% reported that they used all or almost all relevant evaluations to inform Memoranda to Cabinet. These findings compare with those of the survey in 2008–09, prior to the 2009 policy, where 74% of large organizations reported that they almost alwaysFootnote 22 considered evaluation results in Treasury Board submissions and 51% reported that they almost always considered them in Memoranda to Cabinet. Most large organizations established a formal process to include evaluation information in submissions (79%) and Memoranda to Cabinet (65%) in 2013–14. Evaluations were commonly used to support renewals of existing spending—notably, for ongoing programs of grants and contributions.Footnote 23 Central agency analysts typically used evaluation information to inform their advice to Treasury Board ministers, and some noted that they periodically received questions from Cabinet about evaluation results.

Based on performance and application case studies and on stakeholder consultations, the use and utility of evaluations, in particular at central agencies, were affected by how well evaluation timing aligned with the timing of spending decisions. Central agencies sometimes noted that evaluations arrived too late to meaningfully inform renewal decisions. For example, it was noted that key discussions on renewal are often held a year or more before a Treasury Board submission is developed. In those cases, an evaluation that is finished only in time to be appended to the submission can be seen as too late to support central agency analysts. It should also be noted, however, that within departments the draft evaluation reports are often available to program managers much earlier, allowing them to take advantage of the findings and knowledge generated, even if the report has not been fully approved.

Based on the Implementation Review and on case study consultations with central agency representatives, evaluation utility was also affected by how well the evaluation scope matched the unit of expenditure that was subject to a decision. When analyzing and advising on Memoranda to Cabinet or Treasury Board submissions, central agencies' information needs tended to be project-specific or program-specific—that is, specific to the unit of funding being renewed. When evaluations had a broad scope, such as a Program Alignment Architecture Program, they may not have provided sufficiently granular information. Case studies and stakeholder consultations showed that evaluations often did not meet central agencies' needs for information on program efficiency and economy—for example, because evaluators' analysis of program cost-effectiveness was limited by the incompatible structure of financial information. Central agencies also wanted better evidence on program alternatives in government-wide and cross-jurisdictional comparisons. A key risk associated with evaluations not meeting the needs of central agencies for this information is that their analysis and advice to ministers regarding departmental proposals may not be as well supported by neutral evidence as they could be.

Medium to high useFootnote 24 of evaluations in spending reviews (for example, strategic reviews) was enabled by the increased availability and relevance of evaluations,Footnote 25 and most departmental evaluation committee members and senior managers who were consulted, including deputy heads consulted in 2014, reported a high degree of evaluation utility for this purpose. Almost two thirds of evaluators surveyed reported positive impacts on the utility of evaluations for spending reviews because of the policy's comprehensive coverage requirement (63%) and core issues requirement (62%). Deputy heads consulted by the Treasury Board of Canada Secretariat in 2010 reported that strategic reviews raised the profile of the evaluation function by requiring departments to systematically address fundamental issues of program relevance. The majority of program managers surveyed (63%) reported that evaluations were somewhat useful (44%) or very useful (19%) for spending reviews. Most Secretariat program analystsFootnote 26 reported that evaluations supported their analysis during spending reviews and noted that in many departments these reviews increased the demand for evaluations and that the attention paid by senior executives helped evaluation demonstrate its value.

4. Finding: Evaluation use under the 2009 policy was extensive, but use and impact could be improved by ensuring that the evaluations undertaken, and their timing, scope and focus, closely align with the needs of users.

Before the introduction of the 2009 policy, weaknesses in evaluation use had been documented.Footnote 27 After 2009, the Secretariat's monitoring and reporting showed extensive evaluation use during the policy's transition period. In the 2012–13 Capacity Assessment Survey, large departments reported high implementation rates of management responses and action plans; of the 901 management action plan items that were scheduled for completion in 2012–13, 53% were fully implemented by the end of the fiscal year and 21% were partially implemented. In addition, Management Accountability Framework assessment ratings documented extensive evaluation use; more than 96% of large departments were rated acceptable or strong for evaluation use in 2013–14, compared with 77% of large departments in 2007–08 and 78% of large departments in 2008–09.

When consulted in fall 2010, many deputy headsFootnote 28 stated that evaluation was making a solid contribution to decision making, but a number of deputy heads felt that more could be achieved. When consulted in 2014, deputy heads acknowledged the usefulness of evaluations for program and policy improvement and development, strategic reviews and as a means of capturing corporate memory, while also noting that sometimes there were issues with the timing, focus and scale (level of intensity) of evaluations.

In case studies, evaluations were seen as most useful when they were timely, provided new information, and did not merely re-identify problems in program delivery that users already knew about. Evaluations were seen as less useful when they could not lead to organizational learning, when there was no decision to inform, or when no action could be taken. Central agencies as well as program managers, heads of evaluation, and evaluators noted situations where evaluations were less useful, including when their timing, scope, focus, report length and level of analytical rigour did not align with decision makers' needs or interests. Key risks associated with producing evaluations of low utility include spending evaluation resources inefficiently, rather than allocating the resources to evaluations that would be more useful and, more broadly, undermining the perceived value of the evaluation function as a whole.

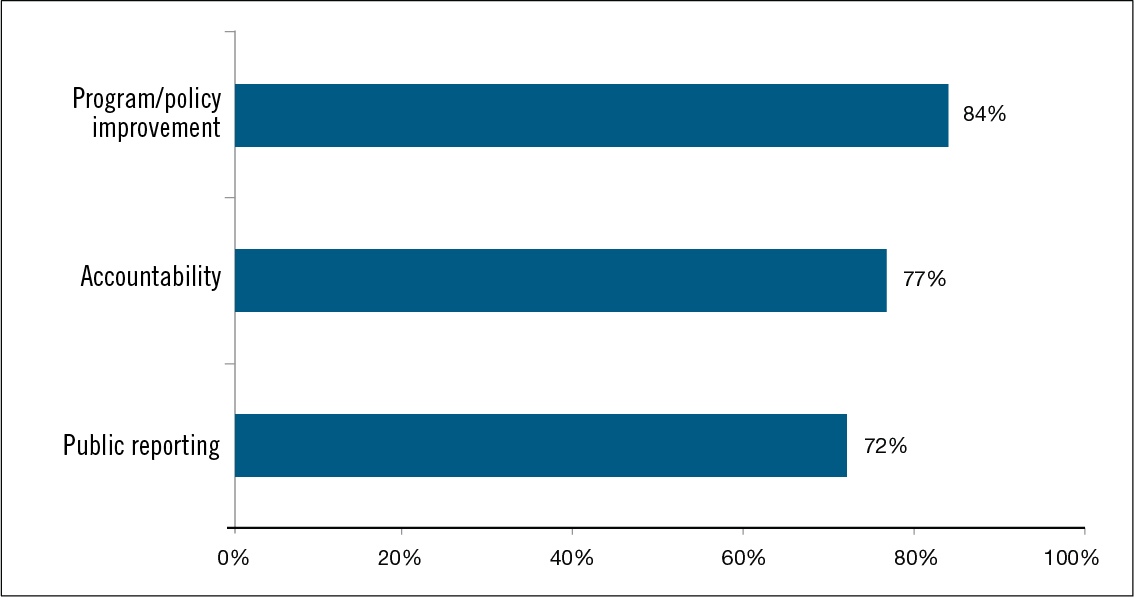

5. Finding: The main use of evaluations was to support policy and program improvement.

Analysis by the Centre of Excellence for Evaluation showed that 75% of evaluation reportsFootnote 29 completed in 2010–11 included recommendations to improve program processes. Similarly, across the evaluations examined in the performance case studies, most recommendations pertained to program improvement, and all were actually used for this purpose. Performance case studies also showed that almost all of the evaluations examined were used for program improvement and that some evaluations resulted in improvements to a larger suite of programs than the one evaluated.Footnote 30 However, performance case studies also showed that some evaluations were not used for improvement purposes when internal decisions left no opportunity for recommendations to be implemented—for example, when the program was eliminated or completely reorganized. The evidence showed that in the course of examining the relevance and performance of programs, evaluations sometimes contributed to operational efficiencies, but that these efficiencies were rarely in the form of direct cost savings.

Among a list of possible evaluation uses, program managers rated program and policy improvement as the one for which evaluations had been the most useful; 81% of program managers rated evaluations as somewhat useful (25%) or very useful (56%) for this purpose. Overall, both evaluators and program managers reported that the policy had a positive or neutral impact on the utility of evaluations for informing program and policy improvement; 56% of evaluators and 35% of program managers reported that evaluation utility increased, whereas only a small proportion (9% and 5% respectively) reported that utility had decreased. The balance (35% of evaluators and 60% of program managers) stated that utility had remained the same.

The evidence showed that the use of evaluation for accountability and public reporting increased, and program managers and evaluators reported that the policy had a positive impact on the utility of evaluations for these purposes.Footnote 31 The 2000 December Report of the Auditor General of Canada noted that performance reports to Parliament made too little use of evaluation findings; by 2011, the annual Capacity Assessment Survey showed that a high proportion of large organizations (89%) considered 80% or more of their evaluations when preparing their annual Departmental Performance Reports. In 2013–14, the annual Capacity Assessment Survey showed that 91% of large organizations had formal processes to ensure that evaluation inputs were considered in parliamentary reporting.

Performance case studies showed that organizations usually posted evaluation reports, including management responses and action plans, on their websites, although in some cases posting occurred long after the evaluation was completed. In the performance case studies, a small number of stakeholders suggested that part of the lag between functional completion of evaluation work and the approval and posting of reports was due to internal discussion when preparing reports for public posting, which led in some cases to less critical reporting.

6. Finding: The increased use of evaluations to support decision making was enabled by an observed government-wide culture shift toward valuing and using evaluations.

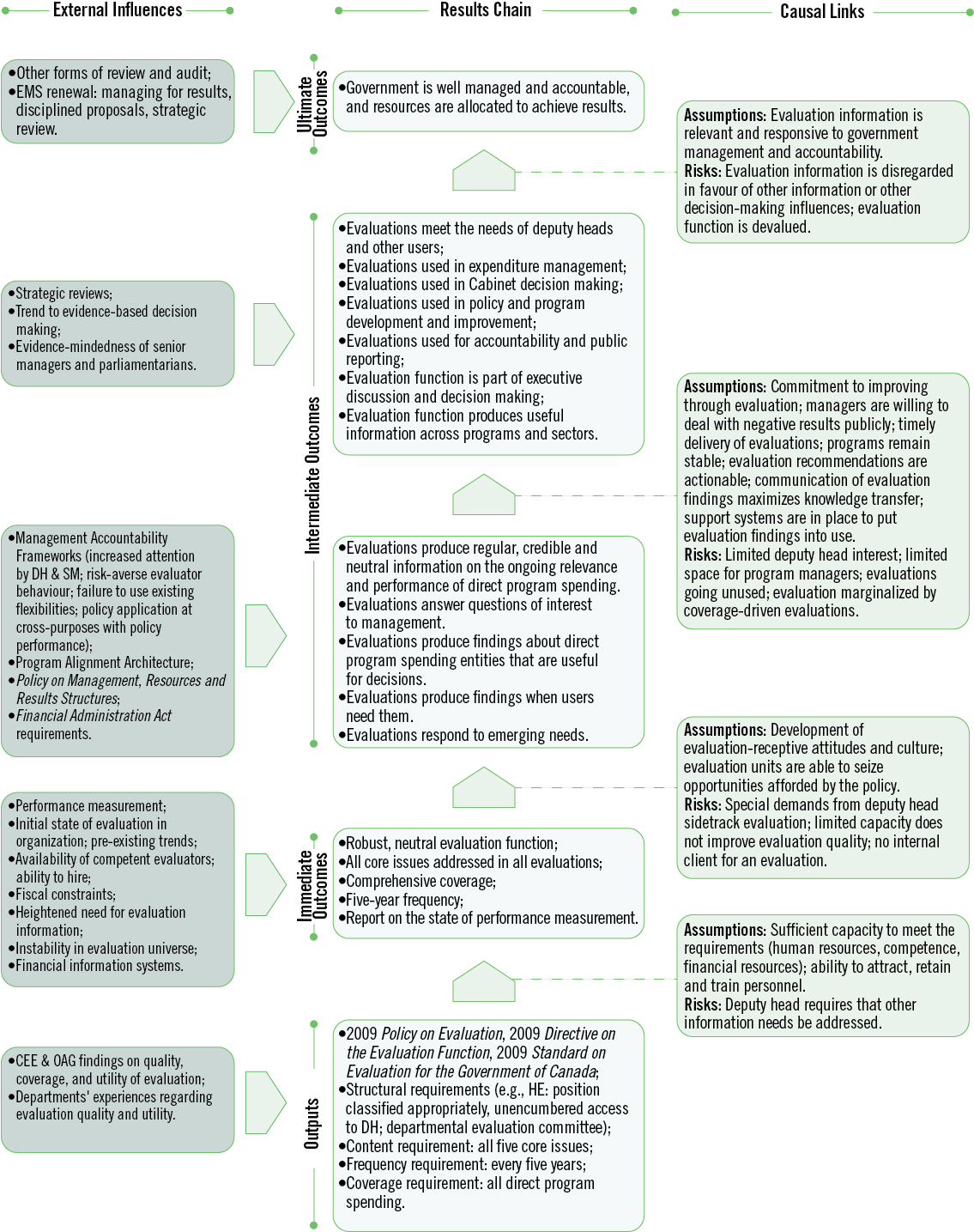

Key conditions had to be established for the policy to achieve its intended outcomes for evaluation use.Footnote 32 According to the theory of change developed for the Policy on Evaluation (see Appendix D), the policy was intended to drive a cultural shift in departments to increase the perceived value of, confidence in, and use of evaluation. This culture shift was evidenced by:

- The shift in stakeholderFootnote 33 perceptions, from viewing evaluations as an oversight burden for programs, to perceiving their value and the skills available in the evaluation unit;Footnote 34

- Increased departmental dialogue about evaluation since 2009, as reported by 71% of surveyed evaluators and 46% of surveyed program managers;Footnote 35 and

- High rates of implementing recommendations, encouraged by the establishment of systems for tracking the implementation of evaluation recommendations, which 97% of all large departments reported having in place by 2013–14.Footnote 36

Deputy heads' more general interest in evaluation likely contributed strongly to the observed outcomes.Footnote 37 Management Accountability Framework assessment ratings of departmental evaluation functions ensured management attention and were partially responsible for bringing a higher profile to evaluation. An analysis of Management Accountability Framework assessment ratings from 2006–07 to 2011–12 showed an increasing trend in evaluation use, as well as coverage, governance and support, and quality of evaluation reports.

3.1.2 Factors Influencing Outcome Achievement (evaluation question 8)

7. Finding: The factors that had the most evident positive influence on evaluation use in departments were policy elements related to governance and leadership of the evaluation function, whereas the factors that most evidently hindered evaluation use were those related to resources and timelines.

Across all lines of evidence, the engagement of senior leaders in departmental evaluation functions appeared to have the clearest positive influence on evaluation use. This influence was attributed, at least in part, to policy requirements related to governance and leadership (for example, the defined roles and responsibilities of deputy heads, departmental evaluation committees and heads of evaluation, and the head of evaluation's unencumbered access to the deputy head), and to a government-wide climate that emphasized results-based management and evidence-informed decision making. Increased senior management engagement led to greater implementation of action plans and enhanced the overall visibility of the evaluation function. The presence of deputy heads on most departmental evaluation committees ensured that evaluation findings were taken seriously, and scrutiny from a more senior executive level may have increased evaluation quality.

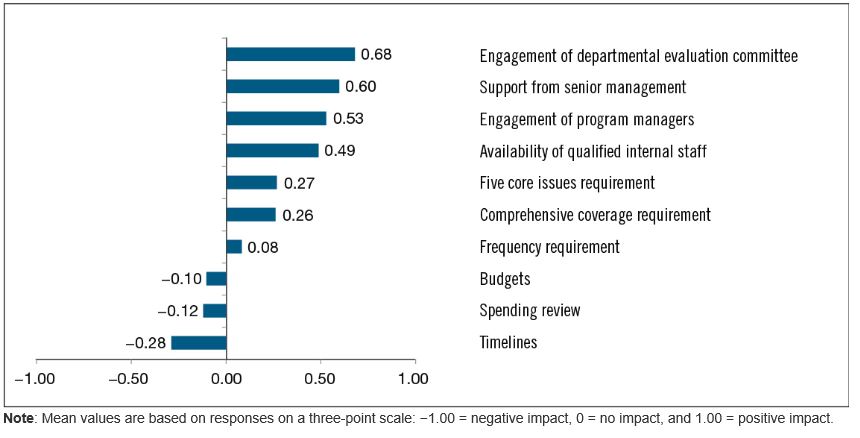

As shown in Figure 3, evaluatorsFootnote 38 reported that the engagement of departmental evaluation committees, senior management and program managers, as well as the availability of qualified internal evaluation staff, had positive influences on achieving policy outcomes. These factors were more often reported by evaluators to have had a positive impact on use and utility than the policy requirements for comprehensive coverage of direct program spending, five-year frequency of evaluations, and examination of the five core issues.

Evaluators surveyed reported that the most evident negative influences on evaluation utility came from the timelines for evaluation projects, from the budgets for evaluation projects, and from spending reviews. Although the Treasury Board of Canada Secretariat did not track changes in the budgets of individual evaluation projects, government-wide financial resources for evaluation were 8% lower in 2012–13 than in 2008–09, before the policy's introduction, despite an initial increase in the first year of implementing the 2009 policy. The performance case studies provided further insights on the influence that spending reviews sometimes had as an external factor on evaluation utility: in some cases, evaluations could not be used for program improvement purposes because the program spending was significantly changed or discontinued. Program managers and senior managers also noted that the contribution of evaluations to decision making could be affected by the availability of other forms of oversight and review, such as spending reviews, especially when some of the input information was common.

Figure 3 (N = 98 to 141)

Figure 3 - Text version

The figure shows the extent to which evaluators, on average, felt that various factors had positive or negative impacts on the use of evaluations. The mean values are based on evaluators' survey responses using a three-point scale, where −1.00 means the factor had a negative impact on evaluation use, zero means the factor had no impact on evaluation use, and +1.00 means the factor had a positive impact on evaluation use.

Seven factors had mean values indicating positive impacts on evaluation use: (1) engagement of departmental evaluation committee (mean value of 0.68), (2) support from senior management (mean value of 0.60), (3) engagement of program managers (mean value of 0.53), (4) availability of qualified internal staff (mean value of 0.49), (5) five core issues requirement (mean value of 0.27), (6) comprehensive coverage requirement (mean value of 0.26), and (7) frequency requirement (mean value of 0.08). Three factors had mean values indicating negative impacts on evaluation use: (1) budgets (mean value of −0.10), (2) spending review (mean value of −0.12), and (3) timelines (mean value of −0.28). The sample size (number of evaluators reporting) ranged from 98 to 141 depending on the factor.

Management Accountability Framework assessments and ratings had a significant influence on policy implementation and results. On the positive side, they drew senior management's attention to evaluation and helped raise the profile of the function. On the negative side, they promoted risk-averse behaviour that may have limited departments' use of the policy's flexibilities. As noted in the Implementation Review, although flexibilities existed to calibrate evaluation effort when addressing core issues, they were not fully exploited owing to concerns that Management Accountability Framework assessment ratings would be adversely affected. This finding was corroborated by the performance case studies and stakeholder consultations, including consultations with deputy heads, who noted that while further policy flexibilities may be needed, existing flexibilities had not been fully exploited.

Another factor that influenced the policy's impact on the conduct and use of evaluations was the amount of grants and contributions spending administered by individual departments. In departments where the amount was large, the impact of the policy was small because of the pre-existing Financial Administration Act requirement (section 42.1) for comprehensive five-year coverage of this spending. Stakeholders noted that organizations with a large amount of grants and contributions spending had evaluation functions that were well established and producing useful evaluations before 2009.

3.1.3 Sustainability of Outcomes (evaluation question 7)

8. Finding: Despite concerns about their capacity to meet all policy requirements, departments generally planned and expected to meet all requirements in the current five-year period.

A comparison of Capacity Assessment Survey data collected before 2009 and in 2012–13 showed that on average, large organizations increased the human resources they devoted to their evaluation functions by 10% but decreased financial resources by 8%. As mentioned earlier, to expand evaluation coverage with these resources, departments used various strategies. Evaluators[Footnote 39] reported that the most effective strategies were calibrating evaluation scope and approach according to program risks, aligning an evaluation's scope with Program Alignment Architecture units, clustering related programs, and increasing the use of internal staff to conduct evaluations.[Footnote 40] The trend toward conducting evaluations with broad scopes, however, affected the utility of evaluations; for example, some program managers found that the information available to inform program improvements was less detailed.

When consulted for the Implementation Review, heads of evaluation highlighted resources as the main factor constraining them in meeting the coverage requirements, and they were concerned about their ability to meet the requirements in a meaningful manner with the available resources. Although heads of evaluation and other stakeholders[Footnote 41] expressed concerns about the function's capacity to achieve and maintain comprehensive coverage over five years, in most cases it appeared that departments could manage their capacity to meet the requirements. Three quarters of evaluators[Footnote 42] (74%) reported that the utility of the evaluation function could be maintained with current resources, and in a subsequent question, more than one third (36%) stated that utility could be increased.

Despite the potential for achieving full evaluation coverage within current capacity, several lines of evidence[Footnote 43] showed that greater flexibility is needed in applying policy requirements related to coverage, timing, scope and focus for evaluations to be more responsive to the information needs of various users. Flexibility is further discussed in subsequent sections of this report.

3.2 Application of the Three Major Policy Requirements

9. Finding: Challenges in implementing comprehensive coverage stemmed from the combined demands of the three key policy requirements (comprehensive coverage of direct program spending, five-year frequency for evaluations, and examination of the five core issues), along with the context of limited resources for conducting evaluations. The five-year frequency requirement appeared to be central to the implementation challenges in most departments.

Although the relevance and impact of the three major policy requirements are discussed separately in the subsections below, this evaluation found that there was a clear interaction among the requirements. For example, challenges associated with comprehensive coverage were often related to the five-year time frame for completing comprehensive coverage or to the requirement for addressing all five core issues, rather than to the comprehensive coverage requirement on its own. Arguably, key challenges associated with the comprehensive coverage and the core issues requirements could be attributed in large measure to the five-year frequency requirement. Many stakeholders supported the periodic evaluation of all programs, but not the inflexibility of a five-year frequency when it did not meet their information needs. Others supported the principle of addressing core issues but questioned the need to address all of them every five years.

Most lines of evidence,[Footnote 44] including stakeholder consultations, documented doubts about departments' capacity to achieve comprehensive coverage over five years. Some stakeholders felt the requirements were too demanding given current resources; others identified resources as the key constraint to meeting coverage requirements and producing meaningful evaluations. In this context, the existing trend toward evaluating larger program entities[Footnote 45] was reinforced by the comprehensive coverage and five-year frequency requirements, which led departments to increasingly opt for evaluating programs in clusters or as Program Alignment Architecture units. Deputy heads consulted in 2014 stated that the comprehensive coverage requirement encouraged departments to evaluate larger units of programming, and case study evidence showed that departments commonly used this strategy to expand evaluation coverage. Further, a sample of departmental evaluation plans analyzed by the Centre of Excellence for Evaluation in 2011 showed that the scope of two thirds of evaluations aligned with Program Alignment Architecture Programs or Sub-Programs.

Case studies showed that to meet the coverage requirements, departments sometimes diverted evaluation resources from higher-priority work or emerging needs to evaluations of low-risk, small or unimportant programs. Deputy heads consulted in 2014 indicated that requiring comprehensive coverage to be achieved over a five-year period limited the flexibility of departments to target evaluations on new or emerging priorities. The policy's five-year frequency requirement, coupled with the Financial Administration Act's requirement for five-year coverage of all ongoing programs of grants and contributions, meant that in some organizations and in some years, the timing of a high proportion of evaluations was inflexible. For example, one head of evaluation suggested that up to 80% of the unit's evaluation plan was fixed as a result of the requirements of the policy and the Financial Administration Act. It was noted in the consultations that little differentiation was made between the five-year requirement of the Financial Administration Act (section 42.1), which pertained specifically to ongoing programs of grants and contributions, and the coverage requirements of the Policy on Evaluation. This finding suggests that deputy heads' observations on the challenges and inflexibilities of the policy's five-year comprehensive coverage requirement may also apply to the legal requirement.[Footnote 46]

A further combined challenge of the five-year frequency and comprehensive coverage requirements was that some evaluations had to be conducted when a program was immature or when its performance measurement data were insufficient, which made these evaluations less useful and more difficult to conduct.

Consultations and other evidence showed that despite the challenges of the coverage requirements, departments did not try to avoid conducting evaluations. However, they perceived a need for the Treasury Board of Canada Secretariat to allow departments to apply the three major policy requirements more flexibly, to ensure the value, utility, efficiency and cost-effectiveness of evaluation. A prevalent view among stakeholders was that for core issues, frequency and coverage, evaluations under the 2009 policy were intended to satisfy central agencies' information needs as much as or more than the needs of senior management in departments.

3.2.1 Comprehensive Coverage (evaluation question 3)

10. Finding: Stakeholders at all levels recognized the benefits of comprehensive coverage for encompassing the needs of all evaluation users and for serving all purposes targeted by the policy. Nevertheless, there were clear situations where individual evaluations had low utility.

A literature review showed that six[Footnote 47] of nine countries, as well as the Development Assistance Committee of the Organisation for Economic Co-operation and Development, recommended comprehensive evaluation coverage. Alternative approaches used in other jurisdictions involved targeting evaluation coverage by considering a variety of factors such as decision-making needs, priorities, program maturity, program type, self-assessment results and important government-wide themes.

Deputy heads who were consulted in 2014 held mixed opinions about the appropriateness of the comprehensive coverage requirement; many were in favour, some emphatically, whereas a smaller number favoured a more risk-based model. However, those who favoured the comprehensive coverage model often stated that the five-year period for achieving comprehensive coverage posed challenges. The reasons that deputy heads supported comprehensive coverage included the following:

- It makes sense to evaluate all programming.

- It ensures disciplined oversight, ensures accountability and keeps issues from being “swept under the carpet.”

- It leads to evaluation scopes that are often at a higher level and that support decision making on important “units of account.”

Central agency respondents generally favoured comprehensive coverage because scrutinizing the government's use of all taxpayers' contributions demonstrates good governance. Case studies showed that without the comprehensive coverage requirement, some low-priority or low-risk programming would have been excluded from evaluation. However, central agency respondents felt it appropriate to periodically evaluate both low-risk and long-horizon programs, as these evaluations could be important in formulating advice to Treasury Board ministers on program renewals and for ensuring public accountability. The performance case studies demonstrated that there was value in evaluating some low-risk programs. At the same time, central agency respondents also recognized that evaluating certain programs was impractical. When central agencies had concerns about the comprehensive coverage requirement, the concerns often related to conducting evaluations of low utility (for example, evaluations that could not lead to actionable recommendations).

Heads of evaluation who were consulted generally agreed on several benefits that they observed from the comprehensive coverage requirement since 2009. In particular, they reported that it made evaluations available to inform decision making (notably, for programs where no past evaluations existed) and to inform processes such as departmental performance reporting and adjustments to Program Alignment Architectures. They also reported that comprehensive coverage increased the profile of the function, validating and empowering the function within departments, while increasing its workload and sometimes its resources.[Footnote 48]

The views of other stakeholder groups, notably program managers and evaluators,[Footnote 49] were more divided. Among those stakeholders that supported comprehensive coverage, there was a general view that in principle, all spending should be evaluated periodically. These stakeholders reported that benefits from the comprehensive coverage requirement included insights on programs that had never been evaluated or had not been evaluated for a long time, and a strategic view of performance that cut across related departmental programs (for example, to identify redundancies and synergies). Evaluators, in particular, generally reported that comprehensive coverage had increased the utility of evaluations for all major uses targeted by the policy.[Footnote 50] In addition, case studies showed that the requirement had a profound effect in some departments, especially those with little grants and contributions spending, because many evaluations were conducted on entities that had never been evaluated before 2009. These evaluations sometimes produced valuable findings that led to program improvements. In some cases, however, stakeholders indicated that if an evaluation had not been required, the organization would likely have conducted a different type of study to address its needs.

Across all stakeholder groups, those that did not support comprehensive coverage generally questioned using resources to evaluate programs where there was little perceived need for the information (for example, for low-risk programs, or where other sources of information existed), where the evaluation might have no utility or where recommendations would be non-actionable. For example, the case studies showed that some stakeholders questioned the merits of applying the comprehensive coverage requirement to assessed contributions because evaluations would have no impact on what Canada is required to spend on these programs. However, case studies showed that existing evaluations of assessed contributions, which focused on the effectiveness of Canada's membership effort (for example, in deriving benefits for Canada or in influencing organizational policies) or on the coordination between the various departments and agencies engaged with the international organization, had demonstrated value. Assessed contributions are also subject to the evaluation requirements of the Financial Administration Act (section 42.1) and the Policy on Transfer Payments.