Guide to Rapid Impact Evaluation

Using this document

This guide provides an overview of the method for rapid impact evaluation (RIE) and how RIE can best be used in the Government of Canada. It focuses on the results of three pilot projects conducted in three federal departments. The pilot projects used a mixed-method approach, so they should not be taken as definitive models for the use of RIE in other contexts. Departments are welcome to adapt the RIE method to suit their particular needs and requirements.

Acknowledgements

This guide has been prepared by the Centre of Excellence for Evaluation of the Treasury Board of Canada Secretariat. It is based on pilot projects supported by Dr. Andy Rowe, who developed the RIE method in 2004 and 2005 to assess the environmental and economic effects of decisions about managing natural resources.

The Results Division thanks Dr. Rowe for his support and advice, and thanks Natural Resources Canada, the Public Health Agency of Canada and Public Safety Canada for their participation in the pilot projects. The Division also thanks the departments and agencies that reviewed and provided comments on draft versions of this document.

Summary: what is a rapid impact evaluation?

A rapid impact evaluation (RIE) provides a structured way to gather expert assessments of a program’s impact. An RIE engages a number of experts to provide a balanced perspective on a program’s impacts, which ultimately increases acceptance and adoption of the RIE’s findings. Each expert assesses program outcomes relative to a counterfactual, which is an alternative program design or situation, so that the program’s impact can be judged against alternatives. Three types of experts are consulted:

- program stakeholders who affect the program or are affected by it

- external subject matter experts

- technical advisors

Time required for an RIE

Depending on the availability of experts, two to six months are needed for an RIE. Some time for learning is also required when an organization first conducts an RIE.

Resources required for an RIE

Depending on evaluation design choices, an RIE requires a low to medium level of resources.

Key benefits of an RIE

An RIE:

- is relatively quick and low-cost compared with other evaluation methods and does not require existing performance data, which gives it the flexibility to be used both during and after a program’s design

- provides a clear and quantified summary of expert assessments of a program’s impact relative to an alternative, which can help inform executives, managers and program analysts

- prioritizes the engagement of external perspectives, which can bring valuable viewpoints not always considered in evaluations of federal programs and increases the legitimacy and accuracy of an evaluation

- allows different versions of a program to be compared using the counterfactual

- supports the use of the evaluation by engaging widely during its conduct

When to use an RIE

It is recommended to use an RIE when:

- an evaluation will assess a program’s impact but cannot observe or reasonably estimate the effects of the program, an intervention to the program or its counterfactual, making other assessment methods such as experimental or quasi-experimental designs difficult

- there is a benefit to comparing the impact of two or more possible versions of a program, such as during a program’s design or its renewal

- there is a need for perspectives that are external to the program or to government, particularly those of subject matter experts

Glossary

direct outcome

An effect that derives directly from a program or intervention. Such effects are typically immediate or intermediate outcomes, depending on the intervention. “Direct outcome” is used because experts are typically better at assessing the probability and magnitude of program effects on direct outcomes rather than on indirect outcomes.

impact

A positive or negative, primary or secondary long-term effect produced by a development intervention, directly or indirectly, intended or unintended (footnote 1). Identifying unintended effects is a strength of RIE because it engages many perspectives.

incremental impact

The difference between what happened with an intervention and what would have happened in a different situation (the counterfactual). An incremental impact is the “extra” or incremental effect that the program has on an outcome, beyond the effect that the counterfactual would have had.
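As a minimal illustration of this definition, the sketch below subtracts an expert’s rating of each outcome under the counterfactual from the rating under the program. The 0-to-10 rating scale, the outcome names and the function name `incremental_impacts` are hypothetical assumptions for illustration, not part of the RIE method as described in this guide.

```python
def incremental_impacts(program: dict[str, float],
                        counterfactual: dict[str, float]) -> dict[str, float]:
    """Return the program rating minus the counterfactual rating for each outcome.

    Assumes (hypothetically) that both dicts rate the same outcomes on a
    common scale, such as 0 to 10.
    """
    if program.keys() != counterfactual.keys():
        raise ValueError("program and counterfactual must rate the same outcomes")
    return {outcome: program[outcome] - counterfactual[outcome] for outcome in program}


# Hypothetical ratings from one expert on an assumed 0-to-10 scale.
program_ratings = {"improved confidence": 7.0, "job placement": 6.0}
counterfactual_ratings = {"improved confidence": 5.0, "job placement": 6.5}

print(incremental_impacts(program_ratings, counterfactual_ratings))
# {'improved confidence': 2.0, 'job placement': -0.5}
```

A negative value indicates that the expert judged the counterfactual more effective than the program for that outcome.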

interest

A perspective or viewpoint on a problem. Among program stakeholders, interests can include those of program beneficiaries, program managers, implementation partners, executives and others. It is valuable to define interests broadly to ensure that all relevant perspectives are engaged.

outcome

An external consequence attributed, in part, to an organization, policy, program or initiative. Outcomes are within the area of the organization’s influence but not within the control of a single organization, policy, program or initiative.

overall impact

An aggregate measure of the program’s incremental impact across all outcomes, reported as a percentage. For example, subject matter experts might assess the overall impact of the program to be 15%, which means that the presence of the program, as opposed to the counterfactual, led to a 15% larger impact overall.
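This section reports overall impact as a percentage but does not give a formula for it. One plausible reading, assumed here for illustration only, is the percentage by which the summed outcome ratings for the program exceed those for the counterfactual. The function name `overall_impact_percent` and the ratings are hypothetical; with these numbers the sketch reproduces the 15% figure used in the definition.

```python
def overall_impact_percent(program: list[float], counterfactual: list[float]) -> float:
    """Percentage by which total program ratings exceed total counterfactual ratings.

    This aggregation rule is an illustrative assumption, not a formula from the guide.
    """
    baseline = sum(counterfactual)
    if baseline == 0:
        raise ValueError("counterfactual total must be non-zero")
    return 100 * (sum(program) - baseline) / baseline


# Hypothetical ratings for two outcomes: program versus counterfactual.
print(overall_impact_percent([6.0, 5.5], [5.0, 5.0]))  # 15.0
```

A ratio-based rule like this one depends on the rating scale chosen, so a different aggregation rule could yield a different percentage.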

program stakeholder

Someone who affects or is affected by the program directly and who has deep expertise in how the program operates.

subject matter expert

An academic or other external expert who has general expertise in the field in which the program operates.

technical advisor

An expert, from inside or outside the organization but external to the program, who has expertise in a specific area related to the evaluation, such as the broader context of the program or a technical or scientific field related to the program.

1.0 Introduction and overview

An RIE is a structured way to gather expert assessments of a program’s impact. RIEs involve three principal steps:

- Evaluators develop a program summary, which:

  - builds consensus among stakeholder groups

  - supports the identification of robust and relevant evaluation questions

  - increases the legitimacy of results and the likelihood that they will be used

- Evaluators systematically engage with three groups of experts to obtain their assessments of the intervention’s impact relative to the impact of a scenario-based counterfactual

- Expert assessments are analyzed, weighted and combined to generate an estimate of the program’s overall impact
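The guide states that expert assessments are weighted and combined but does not specify the weights here. The sketch below assumes, for illustration only, that each expert group’s assessments are averaged first and the group averages are then combined using hypothetical weights. The group scores (incremental impact, in percent), the weights and the `combine_assessments` function are all assumptions.

```python
from statistics import mean


def combine_assessments(assessments: dict[str, list[float]],
                        weights: dict[str, float]) -> float:
    """Weighted average of each expert group's mean assessment.

    assessments maps a group name to its experts' scores; weights maps the
    same group names to hypothetical, non-negative weights.
    """
    missing = assessments.keys() - weights.keys()
    if missing:
        raise ValueError(f"no weight given for: {sorted(missing)}")
    total_weight = sum(weights[group] for group in assessments)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted_sum = sum(weights[group] * mean(scores)
                       for group, scores in assessments.items())
    return weighted_sum / total_weight


# Hypothetical incremental-impact estimates (in percent) from each expert group.
scores = {
    "program stakeholders": [10.0, 20.0, 15.0],
    "subject matter experts": [10.0, 14.0],
    "technical advisors": [18.0],
}
equal_weights = {group: 1.0 for group in scores}
print(combine_assessments(scores, equal_weights))  # 15.0
```

Equal weights treat each group as equally informative. An evaluator could instead weight the groups differently, but that choice would need to be justified and documented.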

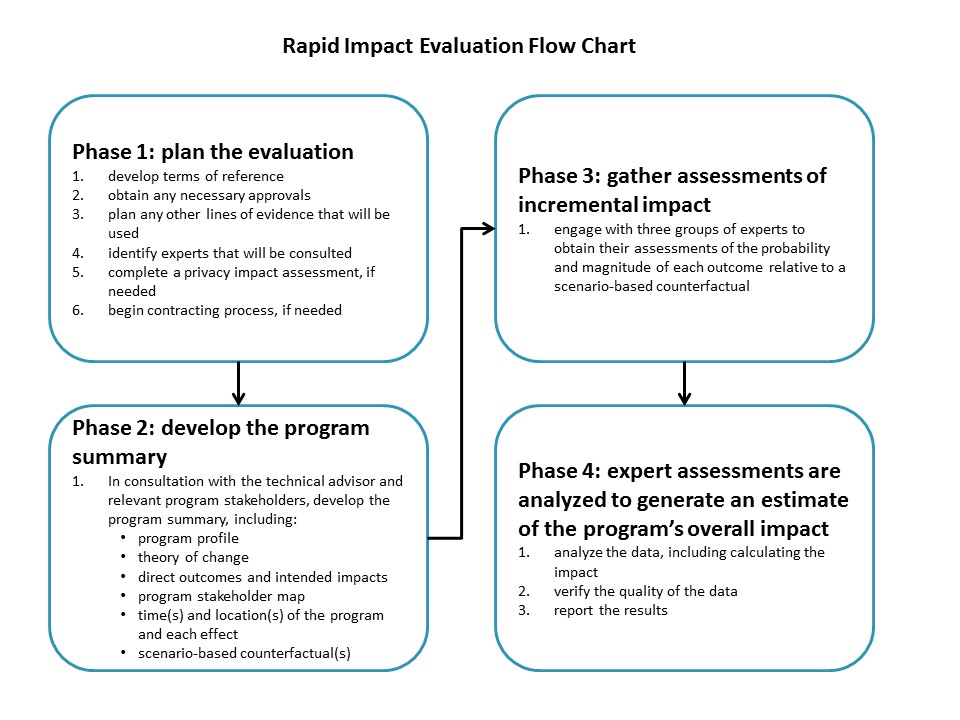

These steps, along with an initial planning stage, are captured in the RIE flow chart in the appendix.

The foundation of an RIE is the scenario-based counterfactual. In consultation with relevant groups, the evaluator develops an alternative to the intervention being evaluated. The intent is to describe what could have occurred if the program had been implemented differently. The alternative must be plausible, feasible and legal. Experts assess the outcomes of the program being evaluated and the outcomes of the counterfactual.

How reliable are RIEs?

RIEs are still new, so relatively little literature and guidance is available. Testing has found that RIEs have strong internal reliability, with a Cronbach’s alpha (a common measure of internal consistency) of over 0.9, which is considered excellent. External validity has also been good when compared with technical forecasts and subsequent empirical observations. In the pilots, results gained through RIE aligned with results from the rest of the evaluation and provided a complementary line of evidence. The intention is not to replace an experimental or quasi-experimental design where such a design is appropriate, but to provide an additional line of evidence that complements other evaluation methods.
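For readers unfamiliar with the statistic, the sketch below computes Cronbach’s alpha with the standard formula alpha = k/(k - 1) × (1 - sum of item variances / variance of total scores), where k is the number of items. The guide does not say what the items were in the RIE tests. Treating each rated question as an item and each expert as a respondent is an assumption made here, and the ratings are hypothetical.

```python
from statistics import variance


def cronbach_alpha(scores: list[list[float]]) -> float:
    """Cronbach's alpha for a respondents-by-items table of ratings.

    scores[r][i] is respondent r's rating on item i. Sample variances are
    used throughout; the ratio would be the same with population variances.
    """
    if len(scores) < 2:
        raise ValueError("need at least two respondents")
    k = len(scores[0])
    if k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need at least two items, with every row the same length")
    items = list(zip(*scores))  # transpose: one tuple of ratings per item
    item_variance_sum = sum(variance(item) for item in items)
    total_variance = variance([sum(row) for row in scores])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Hypothetical ratings: four experts (rows) rating three items (columns).
ratings = [
    [4, 5, 4],
    [2, 3, 3],
    [5, 5, 4],
    [3, 4, 3],
]
print(round(cronbach_alpha(ratings), 3))  # 0.923
```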

Similar to a control group in an experimental design, the counterfactual makes it possible to assess the incremental impact of the program (the difference the program made) relative to what would have occurred if the program were implemented differently. Without a counterfactual, the evaluation cannot determine incremental impact because it cannot tell what would have happened if things were different.

The RIE approach was developed in 2004 and 2005 by Dr. Andy Rowe to assess the environmental and economic effects of decisions about managing natural resources. The RIE approach has since been used in Southeast Asia, the United States and elsewhere.

Between May 2015 and April 2016, RIE was piloted at three Government of Canada departments:

- Public Health Agency of Canada

- Public Safety Canada

- Natural Resources Canada

This guidance is a result of the lessons learned in these pilot projects.

Overall, RIE has the potential to be a fast and effective way of gathering expert assessments of a program’s impact, taking as little as a few months. However, departments may face a considerable learning curve as they learn and adjust to the RIE method.

2.0 When to use a rapid impact evaluation

The pilot projects used RIE in relatively simple contexts so that a compelling counterfactual could be developed and experts could assess program impacts with relative ease. If a program or counterfactual is complicated or not well understood, RIE may be less effective in evaluating impact. That said, complex programs can be difficult to evaluate using any method, and RIE can generate estimates of fairly complicated impacts. RIE can be particularly powerful when an evaluation must assess impact but cannot use other methods of assessment, such as experimental, quasi-experimental or comparative case study designs (for example, for logistical, timing or ethical reasons).

RIE is also useful when evaluators are interested in external perspectives on a program’s impact, such as when evaluating a program that deals with Canadians directly or is based on a program implemented internationally. Engaging with diverse stakeholders can help ensure that the evaluation focuses on the best possible questions and deepens each evaluator’s familiarity with the program. RIE can also provide a richer picture of the program’s strengths and weaknesses, beyond what is apparent to any one group. RIE can therefore be helpful when there are conflicting assessments of the program’s impact.

Finally, RIE is perhaps most valuable when the evaluation seeks to compare multiple versions of a potential intervention, such as during its design or renewal. In such a situation, RIE can be conducted before a program is implemented to assess the relative merits of several possible designs. It can also be conducted:

- during program implementation, to consider whether there is a need to alter course or adjust the program

- after program implementation, to draw out lessons learned and inform subsequent iterations

RIE has an advantage over other evaluation methods in its capacity to compare multiple possible scenarios through the scenario-based counterfactual.

For many evaluations, it may make sense to adopt a mixed-method approach and use some version of RIE in combination with other evaluation methods. The pilot projects took this approach, using various methods and techniques, chosen according to resource constraints, timelines and the goals of each evaluation, to address the evaluation questions.

For example, an evaluator may decide not to create a program summary but instead use the structured method of conducting surveys and workshops outlined below to obtain an assessment of program impact from experts. Alternatively, an evaluator may decide that the existing approach has already gathered sufficient evidence of impacts, but still choose to develop the program summary in order to build consensus and increase the legitimacy of findings and the likelihood that recommendations will be adopted.

3.0 Counterfactuals

A key element of an RIE is the counterfactual. A scenario-based counterfactual is an alternative to what was done. In other words, it is an alternative design or approach to delivery that has the same goals as what is being evaluated. Experts assess the effects of the counterfactual in the same way as they assess the effects of the actual program. In this way, the evaluation can determine what would have happened if things were different and show the relative effects of several options. The choice of an appropriate counterfactual is therefore one of the most important steps in the entire evaluation. Without an appropriate counterfactual, it is not possible to assess incremental impact.

An evaluation may have one or more counterfactuals. A particular strength of RIE is that it allows a “with” and “with different” design by using scenario-based counterfactuals. In government, it is rare that nothing is done if a program is not implemented as originally designed. Instead, another program generally occurs in its place. Using a counterfactual means that those two situations, the program as designed (“with”) and the alternative (“with different”), can be compared.

RIE counterfactuals are scenario-based because they model an alternative state rather than asking what would have happened if the program had not been implemented. Depending on the goals of the evaluation, however, it can also be informative to include a scenario of the status quo, or of whatever would have happened in the absence of the program. An evaluation of a program that is under review may be particularly interested in such a scenario. Other evaluations may be more interested in comparing multiple possible designs.

The counterfactual also enables the comparison of hypothetical alternative approaches in a program’s design before it is implemented. An example would be having multiple options to inform a policy proposal. Alternatively, an RIE might consider a long-established program against a proposed replacement to determine what the possible effects of the change might be.
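Because experts assess each scenario-based counterfactual in the same way as the program, comparing several options amounts to computing the program’s incremental impact against each one. The sketch below assumes, for illustration, that each scenario has already been summarized as a single hypothetical score on a common scale. The scenario names, scores and the `rank_counterfactuals` function do not come from the guide.

```python
def rank_counterfactuals(program_score: float,
                         scenario_scores: dict[str, float]) -> list[tuple[str, float]]:
    """Incremental impact of the program against each counterfactual, largest first.

    A negative value means the scenario was assessed as more effective than the program.
    """
    impacts = {name: program_score - score for name, score in scenario_scores.items()}
    return sorted(impacts.items(), key=lambda item: item[1], reverse=True)


# Hypothetical summary scores for three scenarios; the program itself scores 7.0.
scenarios = {
    "no program (status quo)": 4.0,
    "regulatory alternative": 6.5,
    "proposed replacement": 7.5,
}
for name, impact in rank_counterfactuals(7.0, scenarios):
    print(f"{name}: {impact:+.1f}")
```

In this hypothetical case, the proposed replacement would be judged slightly more effective than the existing program. An ex-ante or renewal comparison is designed to surface exactly this kind of finding.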

To determine an appropriate counterfactual, it can be useful to:

- consult the list of program options developed during the program’s design

- review studies and evaluations of the program

- analyze how the program differs from similar ones that have been proposed or discussed in other publications

- consider what other jurisdictions are doing

The resulting counterfactual may be something that has been implemented elsewhere, or it could be something that has never been implemented. For an evaluation during program design (an ex-ante evaluation), the counterfactuals could be two or more options that are being considered. Program stakeholders, subject matter experts and technical advisors may also have valuable insights into possible counterfactuals.

Importantly, the counterfactual must be feasible, plausible and legal. For example, a program conducted in another jurisdiction may have the same goals, but if it has 10 times the funding or would be illegal in Canada, it would not be a reasonable counterfactual and would not provide a meaningful comparison.

Determining the appropriate counterfactual involves careful consultation with program stakeholders and may require a decision by the evaluator as to what is feasible. For example, if a proposed counterfactual involves a regulatory program instead of an incentive, it may or may not be a feasible alternative, depending on the context.

It is important that the counterfactual be discussed with program stakeholders, especially during Phase 2 of the RIE, when the program summary is developed. Otherwise, some stakeholders may later question the counterfactual and, with it, the legitimacy and salience of the evaluation. If the counterfactual is not reviewed by all interests, there is a risk that it is biased or that it is infeasible because of resource constraints or other limitations.

4.0 Conducting a rapid impact evaluation: a practical guide

It typically takes two to six months to complete an RIE, depending on how the evaluator designs the evaluation, carries out contracting and engages with experts.

This guide describes the four phases of an RIE:

- planning the evaluation

- developing a program summary

- gathering expert assessments of impacts

- analyzing and reporting the results (footnote 2)

For each phase, this guide presents the steps to follow and discusses the motivation for the steps and the theory behind each. Evaluators are welcome to follow the steps as written or draw on them to develop their own process or methods based on the goals of their evaluation.

4.1 Phase 1: planning for a rapid impact evaluation

| Phase | Purpose |
|---|---|
| 1 | Plan the evaluation, assemble the list of experts and obtain necessary approvals. |
| 2 | Develop the program summary, populate the evaluation framework, and engage technical advisors and key program stakeholders in the evaluation. |
| 3 | Engage with the three groups of experts to gather their assessments of program incremental impacts. |
| 4 | Analyze the data generated in Phase 3, verify the quality of the data provided and report the results. |

Before beginning the evaluation, consider the two key factors that can affect how long the evaluation takes: contracting and method of engagement. Planning should account for these factors in estimating timelines:

- Contracting: Depending on the evaluation, evaluators may choose to contract with technical advisors, subject matter experts or a facilitator for an expert workshop. The time required for contracting should be added to the timeline for the evaluation.

- Method of engagement: The RIE method recommends that subject matter experts be consulted in Phase 3 through a facilitated workshop, in person or via online conferencing, to ensure that they sufficiently understand the program and the concepts being evaluated. The pilot projects took a mixed-method approach, using surveys to engage with subject matter experts. The reliability of this approach has not been tested in RIE, but it may be worth considering when appropriate.

Conducting an RIE in only two months is possible when contracting can be done quickly or has been prepared in advance, and when engaging with experts is straightforward. An RIE that takes six months can provide more flexibility in establishing contracts, choosing experts and determining how the experts will be engaged. The time taken for these factors will depend on the questions being asked in the evaluation and on the resources available.

Keeping in mind contracting and the method of engagement, in Phase 1 the evaluator should:

- Determine what will be included in the terms of reference, such as:

  - the approach being used

  - identification of relevant lines of evidence and methods for collecting data

  - the budget for contracting

  - timelines

  - the evaluation questions (these will likely be refined in the program summary in Phase 2)

- Obtain any necessary approvals, such as for:

  - the terms of reference

  - the budget

  - the proposed approach

- Plan any other lines of evidence that will be used to inform the initial program summary or support the evaluation, such as document review, analysis of existing data, or a literature review

- Identify the experts that will be consulted in the evaluation, as outlined in Table 1:

  - for program stakeholders, all interests must be represented

  - technical advisors can be useful throughout the evaluation but, depending on the evaluation, some evaluators may not find such an advisor necessary. If a technical advisor will be used, this should be identified in Phase 1

  - an RIE relies on the willingness of the various expert groups to engage with the process and is designed to minimize burden on the experts. That said, it may be useful to consider how to adapt your approach to increase the likelihood of engagement and reduce the burden on the experts

- Complete a privacy impact assessment, if appropriate

- If the evaluation will involve contracting experts, begin the contracting process

| Group | Example | Attributes | Engagement process | Main output | Suggested number |
|---|---|---|---|---|---|
| Program stakeholder group | Program beneficiaries, key decision makers, program managers, program staff and delivery partners | | Discussion or interviews in Phase 2; web survey in Phase 3; may need to tailor surveys to different interests | Analysis of direct outcomes, cost-effectiveness, other evaluation questions or issues | All stakeholder interests represented |
| Subject matter experts | Researchers, academics, industry leaders and others with knowledge of a relevant field (in the pilot, NRCan used a fuel supplier and fleet manager, among others) | | Phase 3 only; facilitated workshop (in person or through online conferencing); pilots used interviews and web surveys | Analysis of direct outcomes, comments on program improvement, program need and relevance | 4 to 6 |
| Technical advisors | Emeritus faculty at a university or an experienced practitioner in a field that is core to the program | | Phases 2, 3 and 4; web survey should be the same as or similar to that for program stakeholders | Analysis of direct outcomes, comments on program improvement and program need | 1 to 2 |
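During planning, a simple check can confirm that the expert list covers every stakeholder interest and falls within the suggested group sizes in Table 1. The sketch below is a hypothetical planning aid. The roster format, interest names and `check_roster` function are assumptions for illustration, while the 4-to-6 and 1-to-2 ranges are taken from Table 1.

```python
from collections import Counter

# Suggested group sizes from Table 1; stakeholders are checked by interest coverage instead.
SUGGESTED_SIZES = {"subject matter expert": (4, 6), "technical advisor": (1, 2)}


def check_roster(roster: list[dict[str, str]], required_interests: set[str]) -> list[str]:
    """Return planning problems found in a roster; an empty list means none were found.

    Each roster entry is a dict with a "group" key; program stakeholders also
    carry an "interest" key (a hypothetical format).
    """
    problems = []
    covered = {e["interest"] for e in roster if e["group"] == "program stakeholder"}
    for interest in sorted(required_interests - covered):
        problems.append(f"no program stakeholder represents: {interest}")
    counts = Counter(e["group"] for e in roster)
    for group, (low, high) in SUGGESTED_SIZES.items():
        if not low <= counts[group] <= high:
            problems.append(f"{group}: {counts[group]} planned, {low} to {high} suggested")
    return problems


roster = [
    {"group": "program stakeholder", "interest": "program managers"},
    {"group": "program stakeholder", "interest": "program employees"},
    *[{"group": "subject matter expert"} for _ in range(4)],
    {"group": "technical advisor"},
]
interests = {"program managers", "program employees", "program beneficiaries"}
for problem in check_roster(roster, interests):
    print(problem)
```

The check only flags gaps. Whether each interest is adequately represented remains a judgment for the evaluator.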

The theory of Phase 1

Three main groups are consulted in RIE:

- program stakeholders

- subject matter experts

- technical advisors

Because a key risk facing an evaluation is program-centric bias, the goal is to provide a wide variety of perspectives on program outcomes and impact. If only sources from within the program are used for the program summary or data collection, there may be views or perspectives that are missed. This is why interests are intended to encompass all perspectives on the program, and why external subject matter experts are also asked to assess the program’s impact.

RIE uses external subject matter experts to help offset this bias and improve the legitimacy and credibility of the evaluation assessments. Constraints on time or contracting may make it necessary to use experts who work inside government but outside the department where the program operates. Note that only program stakeholders and technical advisors are consulted in Phase 2 of the RIE to help strengthen the program summary; all three expert groups are engaged in Phase 3 to assess the program’s incremental impact.

Identifying appropriate subject matter experts is an important part of the process. Who these are and how they can best be identified will depend on what is being evaluated. Generally, academia, other government departments and research institutions may all be good places to begin looking for people who have knowledge of the subject but who have not been involved with the program itself.

An important decision in Phase 1 is whether to contract with some of the experts. The earlier experts can be identified and the contracting process (if necessary) can begin, the more rapid the evaluation can be. The best approach will vary by department, but it may be useful to explore what options are available, for example:

- sole-source, low-dollar value contracts

- professional services and pre-qualified suppliers or other pre-approved pools of expertise

- memoranda of understanding

- casual contracts

- any other applicable contracting mechanisms

A relevant model might be how focus groups are engaged in your department.

Program stakeholders are divided into subgroups called interests. Interests should include all individuals or organizations that can either affect the program or are affected by it. Each subgroup has a different interest in the program, and their priorities are shaped by that interest and their perspectives. For example, program stakeholders might divide into three different interests:

- program managers

- program employees

- program beneficiaries

All three are consulted as part of the program stakeholder expert group, but they may have very different perspectives on the program’s impacts. Depending on the program, other interests might include partners for implementation, families of program beneficiaries, senior executives and others.

There is no one correct way of dividing stakeholders into interests. How they are divided will depend on the program being evaluated and the sample size. Evaluators may choose to discuss their division of interests with program stakeholders and look at other pertinent evaluations or literature. A sample of the interest groups should be consulted when the program summary is being developed, and all interest groups should be consulted during data collection. Doing so helps prevent the program summary from being program-centric or biased, as it could be if only program employees were consulted.

One particular area of difficulty for the pilot projects was identifying technical advisors. In general, technical advisors are experts in the broader context of the program or in a relevant technical or scientific aspect. They need not be familiar with the program itself. In effect, technical advisors are similar to subject matter experts, but with the expectation that they will engage more systematically with the evaluation to help bring relevant technical knowledge into the evaluation’s design and analysis. Such advisors can help the evaluator develop the program summary in Phase 2, including the direct outcomes, theory of change and the scenario-based counterfactual. Technical advisors also play a role as an expert group to assess impact. Since such advisors are often not familiar with the specific program being evaluated in advance, their involvement in the program summary also helps them develop familiarity with it.

What exactly makes for a suitable technical advisor will depend in part on the program being evaluated and the needs of the evaluation. For example, for an evaluation of a fisheries management project, the technical advisor could be an ecologist or a social scientist such as an organizational specialist. The Public Health Agency of Canada used a scientific expert in its pilot project.

Not all evaluations may need a technical advisor, particularly those for which the evaluator already has expertise in the science or specific nature of the program. That said, a technical advisor can be a useful source of triangulation and can often bring insights that would be otherwise missing from the evaluation.

- At the end of Phase 1, you have planned out the evaluation and identified the experts that will be consulted.

4.2 Phase 2: developing the program summary

| Phase | Purpose |
|---|---|
| 1 | Plan the evaluation, assemble the list of experts and obtain necessary approvals. |
| 2 | Develop the program summary, populate the evaluation framework, and engage technical advisors and key program stakeholders in the evaluation. Lines of evidence: a document review, a literature review, and interviews with program stakeholders and other experts. |
| 3 | Engage with the three groups of experts to gather their assessments of program incremental impacts. |
| 4 | Analyze the data generated in Phase 3, verify the quality of the data provided and report the results. |

In Phase 2, evaluators collaborate with program stakeholders and the technical advisor to develop a program summary of two to three pages that has the information necessary for Phase 3. Phase 2 sets the foundation for the rest of the evaluation work, so it often consumes a considerable percentage of the resources and effort involved in the entire evaluation, sometimes as much as 75%. Phase 2 is particularly important for RIE because of the subsequent consultation with experts in Phase 3. The program summary helps ensure that:

- program stakeholders are engaged

- the stakeholders, evaluators and technical advisors understand the program and relevant concepts in the same way

- the evaluation addresses the right questions

Successfully engaging all interests among program stakeholders and the technical advisor helps ensure a high-quality and well-used program summary. The process of developing the summary could involve several consultations with program stakeholders. The program summary should include at a minimum:

- Program profile: A brief description of the program.

- Theory of change: How the program activities and outputs will lead to the relevant outcomes, and how each level of outcome will influence the subsequent level of outcome. The key mechanisms of change should be clearly outlined.

- Direct outcomes and intended impacts: Effects that occur directly as a result of the program, for example, improved confidence due to job training. They may be either immediate outcomes or intermediate outcomes. The process of identifying direct outcomes and intended impacts with the program stakeholders and technical advisor(s) may also help in identifying unintended outcomes (for example, those not identified in the logic model).

- Program stakeholder map: A list of program stakeholders, highlighting what interests they each represent. The map should also include information that will allow stakeholders to be identified for the purposes of the evaluation.

- Time(s) and location(s) of the program and of each effect: Identifying time and location can be particularly important if the program has multiple sites and if implementation, results and stakeholders vary across location or time.

- Scenario-based counterfactual(s): The alternative or alternatives to the program that will be used as a comparison for the incremental impact analysis.

Phase 2 is a good time to engage with the technical advisor to support the development of the scenario-based counterfactual, theory of change and the rest of the program summary.

Key steps in developing the program summary are as follows (conducted in consultation with the technical advisor as necessary):

1. Assemble the supporting evidence necessary to inform the program summary from program documentation and secondary sources. Examples are:

   - the original memoranda to Cabinet or Treasury Board submissions

   - performance information profiles

   - previous evaluations

   - existing data

   - relevant literature

2. From the assembled evidence, develop draft summaries (one to two pages) of all activities of the program, including outputs, outcomes, purpose and relevant stakeholders.

3. Identify or develop the theory of change.

4. Create impact statements (if they do not already exist).

5. Develop the scenario-based counterfactual(s). This is one of the most important steps in the evaluation and should be done carefully. See section 3.0 for details.

6. Develop interview guides and/or surveys for step 7.

7. Conduct interviews with all or a sample of program stakeholders, and work with the technical advisor to obtain feedback on the summary documents and counterfactual(s). Interviews may be in person or by telephone. It is important to note that:

   - Particular emphasis should be given to confirming the validity of the scenario-based counterfactual(s) and to ensuring that it is plausible, feasible and legal.

   - It may be practical to engage with only some program stakeholders during this phase rather than consulting with all of them. Experts who are difficult to schedule can be engaged in Phase 3 only, which simplifies engagement requirements. If possible, however, all interests should be engaged.

8. Incorporate feedback from program stakeholders and the technical advisor and, if necessary, consult with them again in order to achieve consensus on all elements of the program summary.

An example of the program summary for NRCan’s evaluation is available.

The theory of Phase 2

The program summary is quite different from the standard program profile developed in evaluations, as it focuses more on how the program is actually delivered in practice. It is both a product and a process.

As a product, the summary provides the basic building blocks for the evaluation, which are:

- the basis to define the impacts

- the interests involved with the intervention

- the theory of change

- the counterfactual(s)

The program summary is a key part of designing the evaluation, and also helps the RIE be “rapid.”

As a process, the development of the summary helps engage key interests in the evaluation. This engagement helps build legitimacy for the evaluation, promotes high-quality responses to the survey(s) and increases the likelihood that the evaluation will be used after it is complete. The summary also helps refine the evaluation questions and impacts so that they are relevant to all interests and provide insights into the program’s causal mechanisms, and it confirms or identifies the indicators being measured to respond to the evaluation questions.

The evaluator in Phase 2 consults with two of the three groups of experts involved in an RIE:

- program stakeholders

- technical advisors

This consultation will ensure that the evaluation and its questions are developed with a complete understanding of the program. The perspectives of subject matter experts are less critical because they are less familiar with the program. Technical advisors are consulted in this phase to help provide context and background on any scientific or specialist knowledge relevant to the evaluation.

Depending on what was decided in Phase 1, engagement with experts can be done through individual or group interviews (in person or by phone) or by sending out the material and requesting feedback. Interviews need not be long, but they should confirm that the summary is a reasonable representation of the program or identify where changes are needed. Stakeholders may also have suggestions or contributions to make in developing the counterfactual. Where changes are suggested, including unintended outcomes, these should be incorporated into a revised summary that is recirculated and discussed.

Some program stakeholders and technical advisors may be consulted twice:

- in Phase 2 to help develop the program summary

- in Phase 3 to assess program impacts

In the pilot projects, stakeholders were generally open to being consulted twice. It may be useful to inform stakeholders in Phase 2 of the planned second consultation and clarify the goals of each consultation.

Particular emphasis should be given to confirming the validity of the scenario-based counterfactual and ensuring that it represents a plausible, feasible and legal counterfactual. The counterfactual may not be the preferred option for all the key stakeholders, but all should find it acceptable.

Evaluations must also balance the need to consult with the need to proceed with the evaluation. Evaluators should do their best to ensure that everyone consulted agrees with what is developed, particularly the counterfactual. In some cases, it may be necessary to recognize disagreements and continue with the evaluation, highlighting those disagreements in the report as necessary.

A key part of the program summary is the focus on impacts and outcomes. Briefly, direct outcomes are the changes that have directly occurred as a result of a program. A skills-training program might help people demonstrate increased confidence, for example, or help them find jobs. Impacts are more long-term or high-level effects, and incremental impacts are the difference between what happened with the intervention and what would have happened in the counterfactual.

The goal in developing the program summary is to ensure that all possible outcomes, including ones that may not have appeared in the original logic model or in a Treasury Board submission, are addressed. Experts may identify some unintended outcomes that are not present in the logic model, which can help inform later phases of the evaluation. Such identification and exploration of unintended outcomes can be an important benefit of an RIE.

An approach taken in one of the pilot projects was to provide a list of all the immediate and intermediate outcomes in the program’s logic model to stakeholders and ask them if:

- they expected the program to achieve those outcomes

- there were any other outcomes they had observed or expected to observe that were not listed

Providing such a list and asking these questions can make it easier for experts consulted to provide comments. The evaluation can then discuss any outcomes that stakeholders believed had not been achieved and provide a clear list of unintended outcomes, which can then be explored more fully during the evaluation.

Finally, the program summary is also where the theory of change is developed, if the program does not already have one. As with any theory of change, it should help explain how the program’s activities and outputs will lead to the relevant outcomes, and how each level of outcome will influence the subsequent level of outcome. Theories of change can be useful in any evaluation by pinning down the logic that motivates the program and how the program’s activities lead to its outcomes.

A theory of change is particularly important in RIE because experts will be asked to assess the probability and magnitude of the impacts of the program, and their knowledge and understanding of the program logic will play a key role. Ensuring there is consensus on the program logic and the key mechanisms of change during the development of the program summary will help avoid the problem of different experts assuming that there are different mechanisms of change.

An example of the theory of change for NRCan’s evaluation is available online.

If an RIE is conducted as a line of evidence in a larger evaluation, Phase 2 may be a good time to prepare other parts of the evaluation. For example, if the evaluation will also look at alternative delivery methods or improving process or implementation, it may be useful to engage with experts about those subjects as well.

- At the end of Phase 2, you have completed the program summary, including a counterfactual, and have agreement among the key stakeholders on its relevance.

4.3 Phase 3: gathering assessments of program incremental impact

| Phase | Purpose |
|---|---|
| 1 | Plan the evaluation, assemble the list of experts and obtain necessary approvals. |
| 2 | Develop the program summary, populate the evaluation framework, and engage technical advisors and key program stakeholders in the evaluation. |
| 3 | Engage with the three groups of experts to gather their assessments of program incremental impacts. Lines of evidence: surveys, facilitated workshop and interviews. |
| 4 | Analyze the data generated in Phase 3, verify the quality of the data provided and report the results. |

Having identified the three groups of experts in Phase 1 (program stakeholders, subject matter experts and technical advisors), you must now consult each group for its assessment of incremental impacts.

The three steps in consulting with the expert groups are as follows:

- Identify the key information needed from each group of experts:

- All three groups of experts are asked to assess the effects of the program and the effects of the counterfactual. To do so, they are asked to estimate two things: the probability the intervention will have the desired outcome, and, given it does affect the outcome, the magnitude of the effect. These estimates typically use a scale of 0 to 4.

- Experts should be asked about the probability and magnitude of each of the direct outcomes set out in the program summary.

- If desired, include other questions relevant to the evaluation, such as about program cost-effectiveness or ways the program could be improved to better achieve public policy goals and the program’s objectives. From a theory of change perspective, it might also be useful to ask experts to assess the importance of the activities and outputs relative to the immediate outcomes.

- Based on the time available and the decision in Phase 1 on how to engage with experts, prepare the relevant materials:

- It may be helpful to tailor surveys to different expert groups and different interests within the program stakeholder group. This is particularly true for respondents who are external to the department. Such respondents may be less familiar with the evaluation process and objectives, and will need more background and contextual information than internal stakeholders.

- Gather the assessments of probability and magnitude from all experts in the three expert groups:

- For example, an expert might estimate that a program is highly likely to have an effect on an outcome (3 out of 4 perhaps), but that effect is likely to be quite small (1 out of 4). With those two numbers, the evaluator can calculate the expert’s assessment of the program’s outcomes, as detailed in Phase 4.

Example from NRCan’s ecoENERGY for Alternative Fuels program

The following example was used by the pilot project at Natural Resources Canada (NRCan) to assess the probability and magnitude of each of the direct outcomes identified in the program summary in Phase 2. After respondents had assessed probability and magnitude for each outcome for the actual program, the counterfactual was explained to them, and they answered the same questions with respect to the counterfactual.

Please indicate the extent to which you believe NRCan’s ecoENERGY for Alternative Fuels program has resulted, or will result, in the following outcomes in terms of:

- The likelihood that the outcome occurred or will occur, using a scale of 0 to 4, where 0 means has not or will not occur, and 4 means has or definitely will occur;Footnote 3 and

- What the size of the outcome is or will be, using a scale of 0 to 4, where 0 means none and 4 means large.

The purpose of this question is to assess the extent to which the program has led or will lead to the outcomes (i.e. attribution of outcomes to the program) and the magnitude (or potential magnitude) of the outcomes. It is essential that you rate both columns.

| Outcome | Probability: What is the likelihood the outcome occurred or will occur? | Magnitude: What is, or will be, the size of the outcome if it does occur? |
|---|---|---|
| Enhanced knowledge within the standards community to harmonize/align and update the codes and standards | | |
| More harmonized codes and standards for alternative fuels, particularly natural gas in medium- and heavy-duty vehicles (including refuelling infrastructure) | | |
| Increased efficiencies in deploying natural gas vehicles | | |
| Increased knowledge by policy makers of alternative fuel pathways, particularly natural gas | | |
| Increased knowledge by fuel producers or distributors of alternative fuel pathways, particularly natural gas | | |
| Increased knowledge by fuel users of alternative fuel pathways, particularly natural gas | | |
| Increased knowledge by investors of alternative fuel pathways, particularly natural gas | | |
| Increased awareness by end-users of the benefits of alternative fuel options, particularly natural gas | | |
| Increased awareness by fuel producers or distributors of the benefits of alternative fuel options, particularly natural gas | | |
| Increased awareness among vehicle or equipment manufacturers of the benefits of alternative fuel options, particularly natural gas | | |
| Energy consumers adopt energy efficient technologies (i.e. related to natural gas) | | |
| Energy consumers adopt alternative energy practices, particularly natural gas | | |
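Before the Phase 4 analysis, it can help to screen survey returns for the two problems this instruction guards against: a column left blank, or a rating outside the scale. The Python sketch below is one way to do that. The `OutcomeRating` record layout, the `rating_problems` function and the sample ratings are hypothetical, and the sketch assumes the 0-to-4 scale used in the NRCan pilot.

```python
from dataclasses import dataclass
from typing import List, Optional

# Assumption: the 0-to-4 scale from the NRCan pilot survey; change if another scale is used.
SCALE_MIN, SCALE_MAX = 0, 4


@dataclass
class OutcomeRating:
    """One expert's ratings of one outcome (a hypothetical record layout)."""
    outcome: str
    program_probability: Optional[int]
    program_magnitude: Optional[int]
    counterfactual_probability: Optional[int]
    counterfactual_magnitude: Optional[int]


def rating_problems(rating: OutcomeRating) -> List[str]:
    """List the problems with one rating; an empty list means it can be used in Phase 4."""
    values = {
        "program probability": rating.program_probability,
        "program magnitude": rating.program_magnitude,
        "counterfactual probability": rating.counterfactual_probability,
        "counterfactual magnitude": rating.counterfactual_magnitude,
    }
    problems = []
    for name, value in values.items():
        if value is None:
            # Both columns must be rated, for the program and for the counterfactual.
            problems.append(f"{rating.outcome}: missing {name}")
        elif not SCALE_MIN <= value <= SCALE_MAX:
            problems.append(f"{rating.outcome}: {name} = {value} is outside {SCALE_MIN} to {SCALE_MAX}")
    return problems


# Hypothetical ratings for illustration only.
complete = OutcomeRating("Increased efficiencies in deploying natural gas vehicles", 3, 1, 2, 1)
incomplete = OutcomeRating("Increased knowledge by policy makers", 4, None, 2, 5)
print(rating_problems(complete))    # []
print(rating_problems(incomplete))  # a missing program magnitude and an out-of-range counterfactual magnitude
```

Flagged responses can then be followed up with the respondent rather than silently dropped from the averages.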

The theory of Phase 3

Phase 3 of an RIE begins the engagement with external subject matter experts. Using external experts helps offset program-centric bias in the assessment of impacts, and helps improve the legitimacy and credibility of the evaluation assessments. In some cases, it may be sufficient to use experts from inside government, although at minimum the experts should be outside the department where the program operates. In some evaluations, including one of the pilot projects, the external perspective was very different from those of the other groups of respondents. Such differences can suggest an interesting further line of inquiry or area for research.

Program stakeholders and technical advisors can be engaged through a web survey or interviews to obtain their assessment of impact. Some flexibility may be required, depending on who is being consulted. For example, in consulting with stakeholders, some senior managers may find it easier to respond in an interview rather than a survey due to time constraints.

The RIE method recommends that subject matter experts be engaged through a facilitated workshop, either in person or via tools such as online conferencing. Workshops can be valuable because they provide an opportunity to ensure that external experts understand both the program and the counterfactual, increasing the validity of the evaluation. Workshops can also allow for interaction between subject matter experts, helping clarify areas of concern or confusion. However, workshops may also create an opportunity for some experts to affect the decisions of others. The facilitator should therefore ensure that such influence does not occur, and that each expert provides their own assessment of the probability and magnitude of each impact, informed but not determined by the discussion with others.

All three federal government pilot projects used surveys instead of workshops to engage subject matter experts because of timing and logistical constraints. Using surveys has not been tested in the way that workshops (both online and in person) have, and so may add a further variable to the evaluation. Future evaluations within government should consider what suits the evaluation best. In order to ensure that the consultations are comparable, however, the same questions should be asked of all experts, even if the method varies.

It can also be useful to ask experts to assess how important each outcome is to the overall effect of the program. Understanding how experts assess the relative importance of different outcomes can be useful in interpreting and understanding the evaluation findings. If the evaluation decides to ask for such assessments, they should be gathered in Phase 3 to avoid having to consult with experts again in Phase 4. For example, experts could be asked how important each immediate outcome is to the intermediate outcomes, and how important each intermediate outcome is to the ultimate outcomes. If the evaluation plans to assess overall impact, as discussed in Phase 4, such assessments can help inform that process.

At the end of Phase 3, you should have:

- expert assessments of the probability and magnitude of effects on direct outcomes from the program, and under the scenario-based counterfactual

- an assessment of the other evaluation issues (for example, relevance, effectiveness, efficiency and economy) and suggestions for program improvement, as desired

4.4 Phase 4: analyzing and reporting results

| Phase | Purpose |
|---|---|
| 1 | Plan the evaluation, assemble the list of experts and obtain necessary approvals. |
| 2 | Develop the program summary, populate the evaluation framework, and engage technical advisors and key program stakeholders in the evaluation. |
| 3 | Engage with the three groups of experts to gather their assessments of program incremental impacts. |
| 4 | Analyze the data generated in Phase 3, verify the quality of the data provided and report the results. |

Analysis

The RIE analysis can be performed using a spreadsheet and does not require advanced statistical tools. Although there are a number of steps, the RIE analysis is not intended to be complicated. In general, the three goals are as follows:

- Calculate the mean assessment of probability and magnitude for each expert group and, within the program stakeholder group, for each subgroup that represents a stakeholder interest.Footnote 4

- Calculate the incremental impact attributable to the program assessed by each expert group (the difference between outcomes under the program and the counterfactual).

- Calculate an overall impact for the program.

Calculate the mean assessment of probability and magnitude for each expert group

- Assemble information from the surveys, the facilitated workshop, interviews and any other quantitative data-gathering methods in a spreadsheet and classify responses by the expert group or stakeholder subgroup they represent. Among other information, you should have from each expert an estimate of the probability of the program having a given outcome and the magnitude of the effect if it occurs.

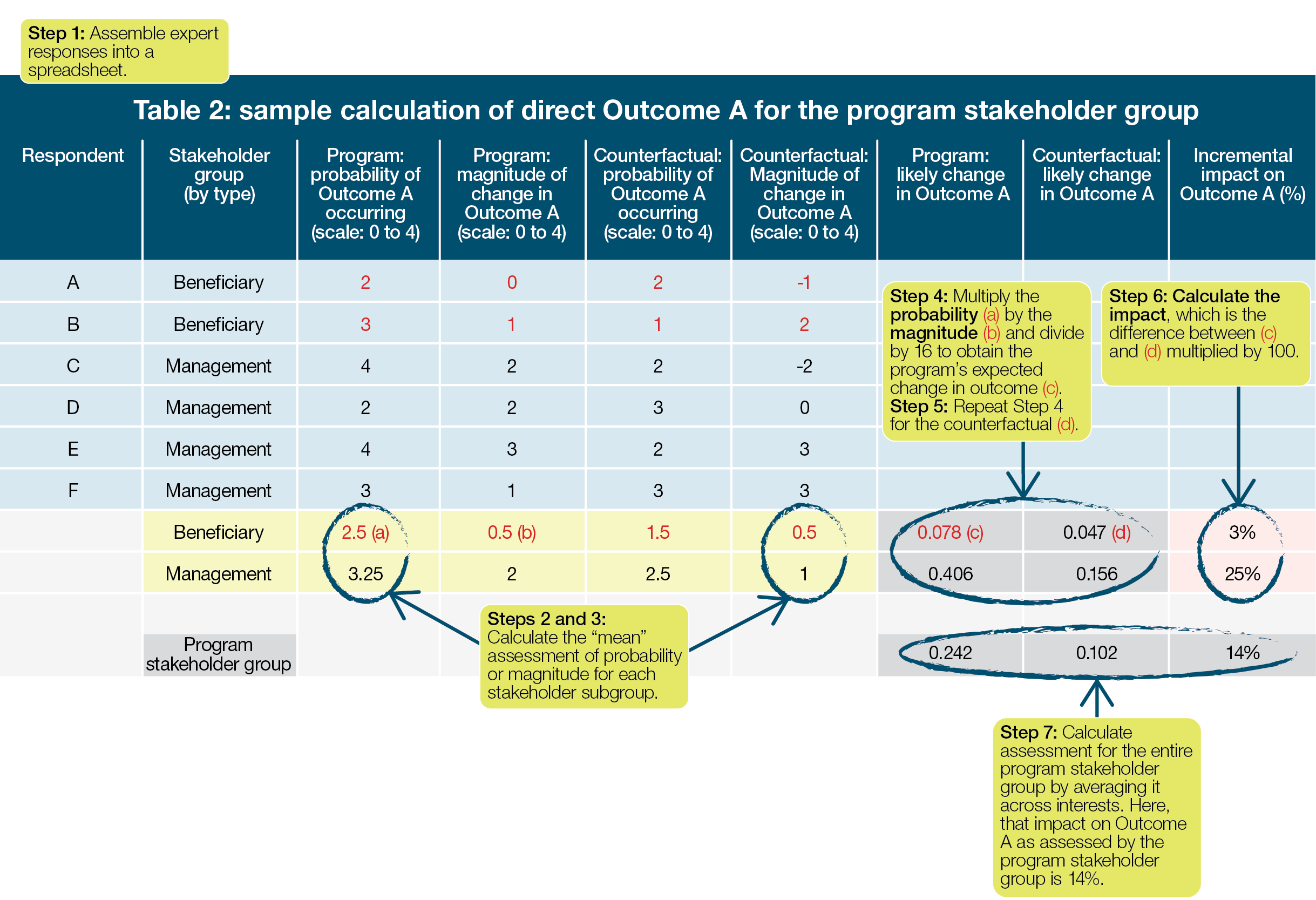

- For subject matter experts and technical advisors, find for each group and each outcome the mean estimate of probability and mean estimate of magnitude (see Table 2).

- For program stakeholders, calculate the mean responses by subgroup or interest. This is done to ensure that the assessment of outcomes of any one subgroup does not outweigh that of other subgroups based on sample size (see Table 2).Footnote 5

You now have a mean of probability and magnitude for each outcome from the following:

- subject matter experts

- technical advisors and

- each subgroup of the program stakeholders
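The averaging in these steps can be sketched in a few lines of Python. The response records and their values below are hypothetical, and the layout (one tuple per expert for a single outcome) is an assumption rather than a prescribed spreadsheet format. Program stakeholders are keyed by subgroup so that each interest gets its own mean, while the other two groups are averaged as a whole.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Optional, Tuple

# Hypothetical ratings of one outcome under the program:
# (expert group, stakeholder subgroup or None, probability, magnitude), 0-to-4 scale.
responses: List[Tuple[str, Optional[str], int, int]] = [
    ("program stakeholders", "program management", 3, 3),
    ("program stakeholders", "program management", 4, 3),
    ("program stakeholders", "program management", 3, 2),
    ("program stakeholders", "program beneficiaries", 2, 1),
    ("subject matter experts", None, 2, 2),
    ("subject matter experts", None, 3, 1),
    ("technical advisors", None, 3, 2),
]


def mean_ratings(rows) -> Dict[str, Tuple[float, float]]:
    """Mean probability and magnitude per expert group, or per subgroup for program stakeholders."""
    buckets = defaultdict(list)
    for group, subgroup, probability, magnitude in rows:
        key = subgroup if subgroup is not None else group
        buckets[key].append((probability, magnitude))
    return {
        key: (mean(p for p, _ in pairs), mean(m for _, m in pairs))
        for key, pairs in buckets.items()
    }


for key, (probability, magnitude) in mean_ratings(responses).items():
    print(f"{key}: probability {probability:.2f}, magnitude {magnitude:.2f}")
```

The same function would be run separately on the counterfactual ratings.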

Impact attributable to the program (Table 2)

- For each group or subgroup, calculate the expected change in each direct outcome under the program. The expected change will always be a value between 0 and 1.

- To calculate the expected change in a direct outcome, multiply the assessed probability of the outcome by the assessed magnitude of the outcome. The result can then be normalized to between 0 and 1 by dividing it by the maximum score allowed by the scales. On a scale of 0 to 4, the maximum score is 16 (4 × 4); on a 10-point scale, the maximum score would be 100.

- Thus, if for Outcome A subject matter experts estimated the probability of Outcome A occurring to be 3 out of 4 and the magnitude if it did occur to be 2 out of 4, the expected change in Outcome A would be (3 × 2)/16 = 0.375.

- Repeat the calculations in step 4 to obtain the expected change in each outcome under the scenario-based counterfactual, or under each counterfactual if using more than one.

- Calculate the incremental impact or marginal change in each outcome by subtracting the expected change in the outcome under the counterfactual from the expected change in the outcome under the program. The result is an estimated percentage change in the outcome that is attributed to the program, as assessed by that expert group: the program’s incremental impact with respect to that outcome.

- For example, if the expected change in Outcome A from the program was 0.375 and the expected change in Outcome A from the counterfactual was 0.2, the incremental impact of the program on Outcome A is 17.5% (0.375 - 0.2).

- In Table 2, the assessed incremental impact of the program on the outcome is 3% and 25% for the program beneficiary group and program management group, respectively.

- It is also useful to calculate the incremental impact on each outcome assessed by each individual respondent, particularly individual subject matter experts, by:

- multiplying their assessed probability and magnitude

- dividing by the maximum score allowed

- taking the difference between the program and counterfactual, as above

You can then compare the assessments of individual experts with each other and with those of other groups, making it easier to identify trends and outliers in the data.

- Finally, to obtain an assessment from the program stakeholder group as a whole, average the incremental impact on each outcome across all subgroups.

At the conclusion of these calculations, you will have assessments of the program’s incremental impact on each direct outcome from each of the three expert groups; in other words, a range of the program’s likely impacts.
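The arithmetic for a single rating is simple enough to express as two small functions. This Python sketch reproduces the Outcome A figure from the text (3 × 2 / 16 = 0.375) and shows per-expert impacts for three hypothetical subject matter experts; the function names and the individual ratings are illustrative assumptions, not part of the RIE method itself.

```python
from typing import Dict, Tuple

Rating = Tuple[float, float]  # (probability, magnitude)


def expected_change(probability: float, magnitude: float, scale_max: float = 4) -> float:
    """Probability x magnitude, divided by the largest possible product so the result lies in 0..1."""
    return (probability * magnitude) / (scale_max * scale_max)


def incremental_impact(program: Rating, counterfactual: Rating, scale_max: float = 4) -> float:
    """Expected change under the program minus expected change under the counterfactual."""
    return expected_change(*program, scale_max) - expected_change(*counterfactual, scale_max)


# Outcome A from the text: probability 3 and magnitude 2 on a 0-to-4 scale.
print(expected_change(3, 2))  # 0.375

# On a 10-point scale the divisor is 100 instead of 16.
print(expected_change(7, 5, scale_max=10))  # 0.35

# Hypothetical individual subject matter experts: (program rating, counterfactual rating).
individual = {
    "expert 1": ((3, 2), (2, 2)),
    "expert 2": ((3, 3), (2, 3)),
    "expert 3": ((4, 3), (1, 1)),
}
per_expert: Dict[str, float] = {
    name: incremental_impact(program, counterfactual)
    for name, (program, counterfactual) in individual.items()
}
print(per_expert)  # {'expert 1': 0.125, 'expert 2': 0.1875, 'expert 3': 0.6875}
```

A respondent whose impact sits far from the others, like expert 3 here, is the kind of outlier the individual comparison is meant to surface.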

Table 2 provides an example of the calculation of two stakeholder subgroups’ (program beneficiaries and program management) assessment of incremental impact on Outcome A. A similar calculation would also be done for all other outcomes and expert groups. Using mean responses gives the program management subgroup and the beneficiary subgroup equal weight, even though more program managers were consulted. Subject matter experts and technical advisors need not be divided into subgroups, but their assessments are otherwise calculated in the same way.

Table 2 - Text version

Table 2 presents a sample calculation of direct Outcome A for the program stakeholder group. It presents information for respondents A through F, including their stakeholder subgroup and their estimates of the probability and magnitude of change in Outcome A under the program and under the counterfactual.

It then presents seven steps for calculating the program stakeholder group’s assessment of incremental impact. First, the information is placed in the spreadsheet. Second and third, the mean assessments of probability and magnitude are calculated for each stakeholder subgroup. Fourth, the probability and magnitude are multiplied together and divided by 16 to get the program’s expected change in Outcome A. Fifth, Step 4 is repeated for the counterfactual. Sixth, the impact is calculated by finding the difference between the program’s expected change in outcome and the counterfactual’s. Finally, the entire stakeholder group’s assessment is calculated by averaging the subgroups’ incremental impacts across interests. In this case, the incremental impact is assessed as 14%.
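The final averaging step can be checked against the Table 2 result. The Python sketch below uses the subgroup impacts quoted in the text (3% and 25%); the function name is hypothetical, and the pooled list at the end is invented purely to show why equal weighting across subgroups matters when one subgroup has more respondents.

```python
from statistics import mean
from typing import Dict, List


def stakeholder_group_impact(subgroup_impacts: Dict[str, float]) -> float:
    """Average the incremental impact across subgroups, giving each interest equal weight."""
    return mean(list(subgroup_impacts.values()))


# Subgroup results for Outcome A quoted in the text (3% and 25%).
subgroup_impacts = {"program beneficiaries": 0.03, "program management": 0.25}
print(round(stakeholder_group_impact(subgroup_impacts), 4))  # 0.14

# For contrast only: pooling hypothetical respondent-level impacts (three managers,
# one beneficiary) lets the larger subgroup dominate the result.
pooled: List[float] = [0.25, 0.25, 0.25, 0.03]
print(round(mean(pooled), 4))  # 0.195
```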

- Optional: calculate overall impact

- (a) Drawing on the literature, discussions with experts in Phase 3, the technical advisor or other sources, assign a weight to each outcome based on its estimated contribution to program incremental impacts. The weights assigned to the outcomes should sum to 1.0, or 100%. In the simplest case, weights may be equal across all outcomes.

- (b) For subject matter experts, calculate the contribution of each incremental impact to the overall impact by multiplying the impact for each outcome by the assigned weight.

- (c) Calculate the estimated overall impact by summing the contributions of the incremental impacts generated in step (b).

- (d) Repeat steps (b) and (c) for the other two expert groups.

The calculation described in step 8 provides an estimate for each expert group of their assessment of the program’s contribution to overall impacts in percentage terms. For example, consider a simplified version of the NRCan pilot, with only three outcomes:

- enhancing knowledge in the standards community

- more harmonized codes and standards for alternative fuels

- increased efficiencies in deploying natural gas vehicles

Suppose the RIE finds that subject matter experts assess the program’s incremental impact on each outcome as 10%, 15% and 20%, respectively. However, after reviewing the literature and speaking to the experts, the evaluator might conclude that the first two outcomes contribute equally to overall impact, but that the third outcome is only half as important as the first two. The weights would then be 40%, 40% and 20%, and the overall impact as estimated by subject matter experts would be 0.4 × 0.1 + 0.4 × 0.15 + 0.2 × 0.2 = 0.14 (or 14%). (For the full NRCan example, see Annex C of NRCan’s Evaluation Report: EcoENERGY for Alternative Fuels Program.)
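Steps (a) to (c) can be sketched in Python as follows, assuming the evaluator has expressed relative importance as simple scores (here 1, 1 and 0.5, matching the statement that the third outcome is half as important). The function names are hypothetical; the impacts are the simplified NRCan figures above.

```python
from typing import List


def weights_from_importance(importance: List[float]) -> List[float]:
    """Step (a): rescale relative-importance scores so that the weights sum to 1.0."""
    total = sum(importance)
    return [score / total for score in importance]


def overall_impact(impacts: List[float], weights: List[float], tolerance: float = 1e-9) -> float:
    """Steps (b) and (c): weighted sum of one expert group's incremental impacts."""
    if len(impacts) != len(weights):
        raise ValueError("need one weight per outcome")
    if abs(sum(weights) - 1.0) > tolerance:
        raise ValueError("weights must sum to 1.0")
    return sum(impact * weight for impact, weight in zip(impacts, weights))


# First two outcomes equally important, third half as important: weights 0.4, 0.4 and 0.2.
weights = weights_from_importance([1, 1, 0.5])
sme_impacts = [0.10, 0.15, 0.20]  # subject matter experts' incremental impacts from the text
print(f"{overall_impact(sme_impacts, weights):.0%}")  # 14%
```

Step (d) amounts to calling `overall_impact` again with each other group's impacts and the same weights.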

Choosing a weight

There is no single correct weighting for calculation of overall impact. Determining weights should draw on the scientific literature, and it may also be helpful to work with the three expert groups in identifying the appropriate weights of the outcomes. Regardless of how the weights are determined, it is important that the evaluation be transparent about them and clearly document and communicate their rationale.

Table 3 provides the assessment of overall impact for a single expert group for a program with four outcomes (Outcomes A to D). Outcome A, whose incremental impact is estimated at 0.14 in Table 2, is assigned a weight of 0.2 in comparison with Outcome B, which is assigned a weight of 0.4. This means that Outcome B is assessed to be twice as important as Outcome A in terms of its contribution to the larger program impact. The overall impact is 0.1625 (or 16%).

- Optional: verify analysis

- A useful step in any evaluation is to verify the validity and reliability of the information obtained. Such verification can be done statistically and by comparing the findings of the RIE to other evaluations of the same or similar programs.

- Report

- You can now report the findings of the evaluation for each of the direct outcomes and impacts identified. For example, subject matter experts might assess that the program enhanced knowledge within the standards community by 30% more than an alternative delivery option.

| Outcome | Incremental impact on each outcome, as estimated by an expert group | Weight of outcome | Contribution to overall impact |
|---|---|---|---|
| Outcome A | 0.14 | 0.2 | 0.028 (0.14 × 0.2) |
| Outcome B | 0.23 | 0.4 | 0.092 (0.23 × 0.4) |
| Outcome C | 0.09 | 0.3 | 0.027 (0.09 × 0.3) |
| Outcome D | 0.155 | 0.1 | 0.0155 (0.155 × 0.1) |
| Impact | | 1.0 | 0.1625 (0.028 + 0.092 + 0.027 + 0.0155); overall impact is 16% |

The theory of Phase 4

Optional: overall impact

An optional step in the analysis is to calculate the overall impact of the program. The simplest method would be to take the mean impact on all outcomes. However, there might also be a justification for weighting impacts differently if some are expected to contribute more to the overall impact than others.
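The difference between the simplest method (the mean impact) and differential weighting can be seen with the Table 3 figures. In this Python sketch, equal weights reproduce the mean of the four impacts, while the Table 3 weights reproduce its overall impact of 0.1625; the dictionary layout and function name are assumptions for illustration.

```python
from typing import Dict

# Incremental impacts and weights from Table 3 (one expert group, Outcomes A to D).
impacts: Dict[str, float] = {"A": 0.14, "B": 0.23, "C": 0.09, "D": 0.155}
table3_weights: Dict[str, float] = {"A": 0.2, "B": 0.4, "C": 0.3, "D": 0.1}


def weighted_overall(impacts: Dict[str, float], weights: Dict[str, float]) -> float:
    """Sum of impact x weight over all outcomes; weights are assumed to sum to 1.0."""
    return sum(impacts[outcome] * weights[outcome] for outcome in impacts)


# The simplest method: equal weights, which is the same as the mean impact.
equal_weights = {outcome: 1 / len(impacts) for outcome in impacts}

print(round(weighted_overall(impacts, equal_weights), 5))   # 0.15375
print(round(weighted_overall(impacts, table3_weights), 5))  # 0.1625
```

Here the two approaches differ by less than one percentage point, but they can diverge more when heavily weighted outcomes have unusually large or small impacts.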

Depending on the question the evaluation is trying to answer, this step may not be necessary. If outcomes are diverse, or if the evaluation is interested in incremental impacts on specific outcomes rather than in the program’s impact in general, then the incremental impact on each outcome may be all that is needed. This approach also avoids the need to choose a weight for each outcome, which may be difficult.

Verification

A useful step in any evaluation is to verify the validity and reliability of the information obtained. Since RIE consults three expert groups, it is particularly useful to check whether the three groups are consistent (inter-rater reliability). Cronbach’s alpha is a standard measure of reliability (internal consistency) and can be computed in statistical packages such as SPSS, Stata or R. It is typically not straightforward to compute in the basic Excel package, however.
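For evaluators without a statistical package, Cronbach’s alpha can also be computed directly from its standard formula, alpha = k / (k − 1) × (1 − sum of item variances / variance of totals). The Python sketch below treats each expert group as an "item" and each outcome as a case, which is an assumption about how to apply alpha here; the impact values are hypothetical, and sample variances come from Python’s `statistics` module. Note that alpha reflects whether the groups move together across outcomes, not whether they agree on levels, so a group that is systematically more optimistic can still produce a high alpha.

```python
from statistics import variance
from typing import List


def cronbach_alpha(scores: List[List[float]]) -> float:
    """Cronbach's alpha for a table with one row per outcome and one column per expert group."""
    if len(scores) < 2 or len(scores[0]) < 2:
        raise ValueError("need at least two outcomes and two expert groups")
    k = len(scores[0])
    columns = list(zip(*scores))
    sum_item_variances = sum(variance(column) for column in columns)
    total_variance = variance([sum(row) for row in scores])
    if total_variance == 0:
        raise ValueError("row totals do not vary, so alpha is undefined")
    return (k / (k - 1)) * (1 - sum_item_variances / total_variance)


# Hypothetical incremental impacts: columns are subject matter experts,
# program stakeholders and technical advisors; rows are four outcomes.
impacts = [
    [0.10, 0.20, 0.15],
    [0.15, 0.25, 0.20],
    [0.20, 0.30, 0.25],
    [0.05, 0.10, 0.10],
]
print(round(cronbach_alpha(impacts), 3))  # 0.986
```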

The evaluator might also check whether RIE estimates are in line with those from other evaluations of the program or studies of similar programs. Comparing estimates could be done statistically or through a literature review or background research. For example, if all previous impact evaluations of a program had found one thing and the RIE found another, more research would be needed to identify the reason for the difference. Evaluators might start by checking whether some expert groups departed from past findings more than others, and whether the differences were due to methodology, timing or other factors. Past RIEs have typically found that experts from within the program are more optimistic than external ones, which might explain the differences if past evaluations relied more heavily on information from within the program.

Reporting

One of RIE’s strengths is that it reports a clear and concise summary of what experts assess to be the program’s impact, as well as the potential impact of one or more alternatives. Senior executives and program management are then able to reflect on results in the context of alternatives and discuss options for program improvement directly. Such reflection is particularly important when assessing program effectiveness, but may also be informative when discussing program efficiency relative to possible alternatives.

Providing evaluation readers with this information requires a clear explanation of what information the RIE has provided. In brief, the process outlined above allows the reporting of several key elements:

- a program summary, including the intended and unintended outcomes of the program, which external experts and program stakeholders agree is a reasonable reflection of the program

- a measure of the effect of the program and the counterfactual(s) on each outcome as assessed by the expert groups

- the impact of the program relative to some feasible and plausible alternative(s), that is, what happened because of the program that would not have happened under the alternative

- optional: a measure of the overall impact of the program

Findings are typically best reported as percentages. For example, subject matter experts might assess that the program enhanced knowledge within the standards community by 30% more than an alternative delivery option. Program stakeholders might assess an impact of only 20%, and technical advisors, 25%. Overall, therefore, experts assessed that the program enhanced knowledge within the standards community by 20% to 30% more than the alternative.

If the overall impact has been calculated, for transparency this is also best reported as a range. If the three expert groups assessed overall impacts of 11%, 14% and 16%, the evaluation can report that the program is expected to provide an overall improvement in outcomes of between 11% and 16% more than the alternatives considered. Although it is possible to calculate a single average for the three groups, such a calculation can be misleading if the three groups provided a wide range of assessments. A range is typically preferable.
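Reporting a range rather than a single average is straightforward to automate. This minimal Python sketch, using the percentages from the examples above, formats the lowest and highest group estimates; the function name and the placeholder group labels for the overall-impact example are assumptions.

```python
from typing import Dict


def report_range(estimates_by_group: Dict[str, float]) -> str:
    """Express the expert groups' estimates as a low-to-high range in whole percentages."""
    low = min(estimates_by_group.values())
    high = max(estimates_by_group.values())
    return f"between {low:.0%} and {high:.0%}"


# Impact on knowledge within the standards community, from the example above.
knowledge = {"subject matter experts": 0.30, "program stakeholders": 0.20, "technical advisors": 0.25}
print(report_range(knowledge))  # between 20% and 30%

# Overall impacts of 11%, 14% and 16%; the group labels here are placeholders.
overall = {"group 1": 0.11, "group 2": 0.14, "group 3": 0.16}
print(report_range(overall))  # between 11% and 16%
```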

In some cases, there may be systematic differences between the estimates of each group of experts, which can suggest interesting areas for further analysis or discussion. For example, subject matter experts might be significantly less optimistic about the program’s impacts than internal program stakeholders. Such a perspective might have been missed by an evaluation that did not seek external perspectives and can highlight important issues. Internal stakeholders might be missing some negative unintended consequences, for example, or not be aware of a similar program elsewhere that performed poorly. Equally, internal stakeholders might have access to additional information that supports their optimism but that has not been well communicated or researched. Such findings can be of interest to management and suggest areas for further exploration and evaluation.

5.0 Advantages and challenges of rapid impact evaluation

Like any method, RIE has both strengths and limitations, in addition to the learning curve involved in conducting one for the first time.

Advantages

- Impact through a counterfactual: RIE provides a clear assessment of impacts, which can be used to reinforce and explore other areas evaluated and inform reporting and analysis. It was interesting in the pilot projects to observe the quantification of different perspectives from the expert groups, which allowed the evaluation to explore why differences arose when they were observed.

- Control for bias: By including all areas of interest in the program stakeholder group, as well as experts completely external to the program, RIE helps control for bias. A particular advantage is the inclusion of external expert evidence that is often not included in evaluations but can be useful to inform evaluation issues related to relevance, effectiveness and impact (or potential impact).

- Participatory process: RIE focuses on engaging all stakeholders in the evaluation. External program stakeholders in one pilot, for example, commented that they appreciated seeing the full picture of what the program was trying to do as they had participated in only one aspect. Developing the program summary supports this process, helping engage all stakeholders and develop the basis for the rest of the evaluation.

- Complementary findings: RIE provides an alternative line of evidence that can complement other methods used in the evaluation, allowing for triangulation and increased confidence in evaluation results.

- (Relatively) fast and flexible: Because of its relative speed and flexibility as well as the use of the counterfactual, RIE is well placed to be conducted during program design, helping inform managers of the costs and benefits of different options. One of the pilot projects found RIE particularly useful because program managers were considering program renewal while the evaluation was being conducted, and RIE helped speak to that issue. RIE can also be conducted ex-post.

Challenges

- Expert assessments: RIE relies on expert assessments of impact rather than on direct observation and collection of data on program performance. On its own, this evidence may not be sufficient to satisfy the needs of management and public reporting.

- Technical advisors: RIE requires evaluators to identify appropriate subject matter experts and technical advisors external to the program. This can sometimes be a challenge, and it may take several conversations with colleagues to identify someone who would be appropriate and add value.

- Counterfactuals: RIE hinges on having a good counterfactual. Evaluators who are less familiar with counterfactuals may need a learning period, particularly the first time they conduct an RIE. It is important to spend time getting the counterfactual right.

- Complex contexts: Some contexts may be impractical for an RIE, particularly if a program is too complex for a compelling counterfactual to be developed or for experts to reasonably assess program impacts.

- Reporting impact: Readers of the evaluation must be able to understand clearly what is being reported as the program's impact. Having another evaluator, ideally someone who is not too familiar with RIE, review the evaluation report can be helpful. Feedback and questions from the program on the draft report can also help identify where more clarity is needed.

6.0 Conclusions

RIE can be an important addition to an evaluator’s toolbox. Like all evaluation methods, it is not appropriate in every context, nor can it answer every question. But it can provide clear insights into how experts assess a program’s impact, and it supports the engagement of a wide variety of perspectives and viewpoints, both internal and external. Evaluators are encouraged to consider using RIE, whether as a full approach or as part of a broader mixed-method evaluation, alongside other methods that support their evaluations.

For more information

For more information on evaluations and related topics, visit the Centre of Excellence for Evaluation section of the Treasury Board of Canada Secretariat website. Also see the Policy on Results.

Readers can also contact the following:

Results Division

Expenditure Management Sector

Treasury Board of Canada Secretariat

Email: results-resultats@tbs-sct.gc.ca

Appendix A: rapid impact evaluation flow chart

Figure 1: text version

Figure 1 is a flow chart of the four phases of a rapid impact evaluation.

In Phase 1, the evaluation is planned, including obtaining the necessary approvals, identifying experts to be consulted, and beginning the contracting process.

In Phase 2, a program summary is developed.

In Phase 3, experts are consulted on the probability and magnitude of each outcome relative to a scenario-based counterfactual.

In Phase 4, the expert assessments are used to estimate the program’s overall impact, and the results of the evaluation are reported.
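To make the link between Phase 3 and Phase 4 more concrete, the sketch below shows one way expert ratings of probability and magnitude could be rolled up into an overall impact estimate. It is a hypothetical illustration under stated assumptions, not the calculation prescribed by this guide or used in the pilots. It assumes that each expert rates an outcome's probability on a 0 to 1 scale and its magnitude relative to the counterfactual on an assumed -3 to +3 scale; that the product of the two is that expert's expected impact; that expected impacts are averaged across experts; and that outcomes are combined using optional importance weights. The function names, scales, weights and ratings are all invented for illustration.

```python
from statistics import mean
from typing import Dict, List, Optional, Tuple

# Hypothetical illustration only: the rating scales, the probability x magnitude
# product and the importance weighting are assumptions, not the guide's method.
# Each rating is (probability in [0, 1], magnitude on an assumed -3..+3 scale).
Rating = Tuple[float, float]


def expected_impact(ratings: List[Rating]) -> float:
    """Average, across experts, of probability x magnitude for one outcome.

    Magnitude is judged relative to the counterfactual, so a negative value
    means the outcome is worse than under the counterfactual scenario.
    """
    return mean(p * m for p, m in ratings)


def overall_impact(outcomes: Dict[str, List[Rating]],
                   weights: Optional[Dict[str, float]] = None) -> float:
    """Importance-weighted average of the per-outcome expected impacts.

    If no weights are given, every outcome counts equally (an assumption).
    """
    if weights is None:
        weights = {name: 1.0 for name in outcomes}
    total_weight = sum(weights[name] for name in outcomes)
    weighted_sum = sum(weights[name] * expected_impact(ratings)
                       for name, ratings in outcomes.items())
    return weighted_sum / total_weight


if __name__ == "__main__":
    # Invented ratings from two experts for two outcomes (not pilot data).
    ratings = {
        "outcome_a": [(0.8, 2.0), (0.6, 1.0)],
        "outcome_b": [(0.5, -1.0), (0.9, 0.0)],
    }
    print(round(overall_impact(ratings), 3))  # equal weights: 0.425
    print(round(overall_impact(ratings, {"outcome_a": 3.0, "outcome_b": 1.0}), 4))  # 0.7625
```

Averaging across experts keeps each expert's view visible in the per-outcome figures, so divergent assessments can still be examined before they are combined; a department adapting RIE would substitute its own rating scales and aggregation rules.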

Appendix B: the pilot project

This appendix details the process of conducting an RIE in each of the three pilot departments, along with some general observations based on that process. Note that, as conducted in the pilot, RIE included only three phases; some of the information below has therefore been adjusted to align with the four phases described in the rest of the guide.

Evaluation reports

- Evaluation of the Public Health Agency of Canada’s Non-Enteric Zoonotic Infectious Disease Activities 2010-2011 to 2015-2016

- Evaluation Report: EcoENERGY for Alternative Fuels Program

  - Counterfactual

  - Annex A: Program Logic Model and Theory of Change

  - Annex B: Program Summary

  - Annex C: Calculation of Net Impact

- 2015-2016 Evaluation of the Kanishka Project Research Initiative

RIE process as implemented in the pilot

Public Health Agency of Canada (PHAC)

| Phase | Timeline | Process |
|---|---|---|
| Phase 2: program summary | October to November | |
| | November and December | |
| Phase 3: expert assessment | November and December | |
| Phase 4: analysis, verification and reporting | December | |
| | January and February | |

Natural Resources Canada (NRCan)

| Phase | Process | Level of effort |
|---|---|---|
| Phase 1: planning | | 3 to 5 days |
| Phase 2: program summary | | 10 to 15 days |
| Phase 3: gathering information | | 3 to 4 weeks (notes 1 and 2) |
| Phase 4: analyzing and reporting results | | 5 to 10 days (note 4) |

Notes

1. Phase 3 included:

   - preparation of the data collection tools (surveys, interview guide, documentation for consultations with subject matter experts), including translation and survey programming (level of effort: 5 to 7 days)

   - conducting the survey, interviews and subject matter expert consultations (level of effort: 2 to 3 weeks)

2. The three to four weeks cover the RIE-specific work (surveys and consultations with subject matter experts) and the literature review. The document review and data analysis began shortly after the methodology report was completed in Phase 1.

3. As part of the pilot, the role of the technical advisor in RIE was confirmed late in Phase 2. Despite best efforts, the timing did not allow a technical advisor to be included in this evaluation.

4. This estimate does not include internal departmental review and approval processes, translation, HTML conversion and web posting.

The draft evaluation report was then provided to the program for review and comment, along with a request to start drafting its Management Response and Action Plan. The draft report was finalized, incorporating the program's feedback where appropriate, in preparation for the Departmental Evaluation Committee.

Public Safety Canada (PS)

| Timeline |
|---|
| May to July 2015 |
| August to September 2015 |
| October to December 2015 |
| January 2016 |
| February to April 2016 |
| May to July 2016 |

Summary of departmental experiences

The pilot project was not without challenges. Several departments noted that during the pilot, certain aspects of the evaluation design, particularly the effort to fit the evaluation into the RIE framework, were done somewhat too quickly and with relatively little guidance available. As a result, there was not enough time to fully understand and appreciate some of the RIE steps and requirements.

That said, participants felt that RIE was a good addition to current approaches and methods in evaluation, particularly for ex-ante and mixed-method evaluations and for evaluations supporting program renewal. Quantitative assessments from experts can add validity and credibility to evaluation findings. However, RIE's use as an evaluation methodology may be somewhat limited by its reliance on expert perception and by the potential difficulty of developing a counterfactual in complex contexts. Departments should consider carefully where to apply the approach, as it may not be suitable for all programs.

Some lessons learned

A few key lessons were learned during the pilot projects:

- It is important to understand the methods and phases of RIE and how they align with the evaluation questions being addressed.

- As the roadmap for the evaluation, the evaluation matrix was one of the most important reference tools to guide the evaluation team and was referred to continually throughout.

- The theory of change was not explicitly shared with program stakeholders so as not to overwhelm them with evaluation jargon or process. Instead, elements of it were incorporated into the consultation guide.

- Taking rating questions directly from the survey and including them in the interviews with program management was highly effective and enabled data for key questions (for example, importance, likelihood and magnitude) to be combined with survey data.

- It is important to tailor the survey to program stakeholder interests as much as possible. In the pilot projects, although the survey was tailored (through skips or different survey instruments), the external program stakeholder survey still ended up being too long, and some questions were not relevant to some stakeholder groups. Because the survey is the primary data collection method, it is important to ensure that key questions are asked of all program stakeholders, but it may be possible to vary some of the additional questions that address core evaluation issues (for example, the extent to which needs are being met).

© Her Majesty the Queen in Right of Canada, represented by the President of the Treasury Board, 2017.

ISBN: 978-0-660-09329-1